When Doing Stopped Being Learning

“What I cannot create, I do not understand.” – Richard Feynman [1]

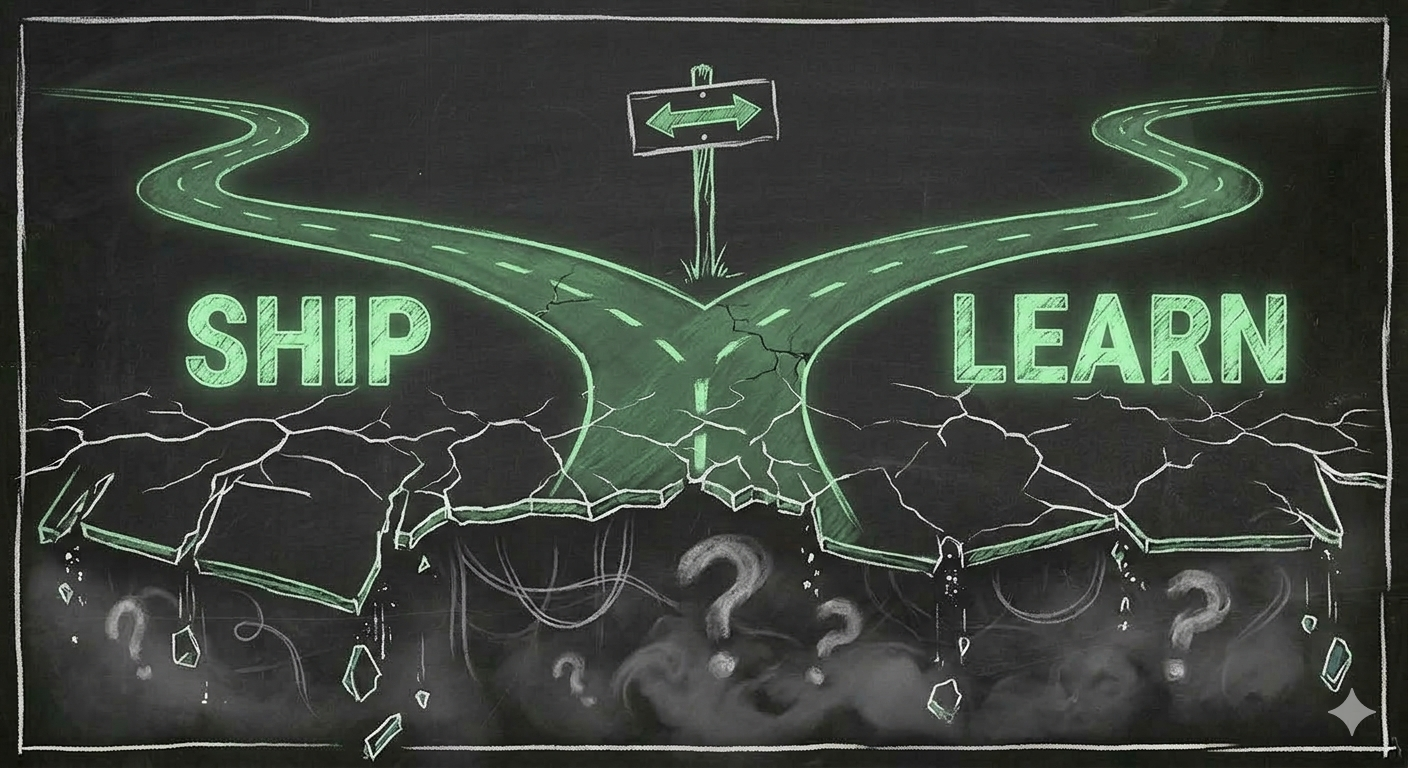

In the age of AI, every knowledge worker faces the same hidden trade-off: use the tool to produce, or use the tool to understand. You cannot fully do both. How you resolve that tension defines what you become.

I. When doing was learning

Before AI, the coupling was tight. To ship software, you had to understand it. There was no shortcut. Writing the code was the learning. Debugging was the understanding. The act of production and the act of comprehension were the same act.

A developer building a REST API learned HTTP semantics, not because they wanted to, but because the code wouldn’t work otherwise. An architect designing a distributed system learned consistency models through the pain of getting them wrong. That pain stuck. We process and remember negative events far more deeply than positive ones [2]: a failed deploy at 2 a.m. teaches more than a hundred clean releases. The feedback loop was immediate and unforgiving: if you didn’t understand it, it didn’t ship. If it shipped, you understood most of it.

Doing and learning were not aligned by virtue. They were aligned by constraint. The tools were hard enough that competence was a prerequisite for output.

This had a powerful organizational side effect. Managers didn’t need to measure learning. They didn’t need to incentivize understanding. Output was the proof. Velocity and competence were, for all practical purposes, the same signal. Every sprint delivered working software and deeper expertise. The two were bundled — inseparable, invisible, automatic.

II. The decoupling

AI broke the coupling.

You can now produce without understanding. A user can generate a working app without knowing why it works. They can produce architecture diagrams for systems they’ve never reasoned through. The code compiles. The tests pass. The app works. From far away, the outcome looks very similar to what a senior engineer would produce.

But nothing was learned. Production and comprehension have split into two separate activities — and often competing ones.

To learn from an AI interaction, you have to slow down. Read the generated code line by line. Ask why this pattern and not that one. Break it deliberately and watch what happens. If you really want to learn, rebuild it yourself a week later: you will feel where the difficulty actually lives, and you will find out how much of the problem and its solution you actually remember. This takes time. It looks like inefficiency. From a productivity perspective, it is inefficiency.

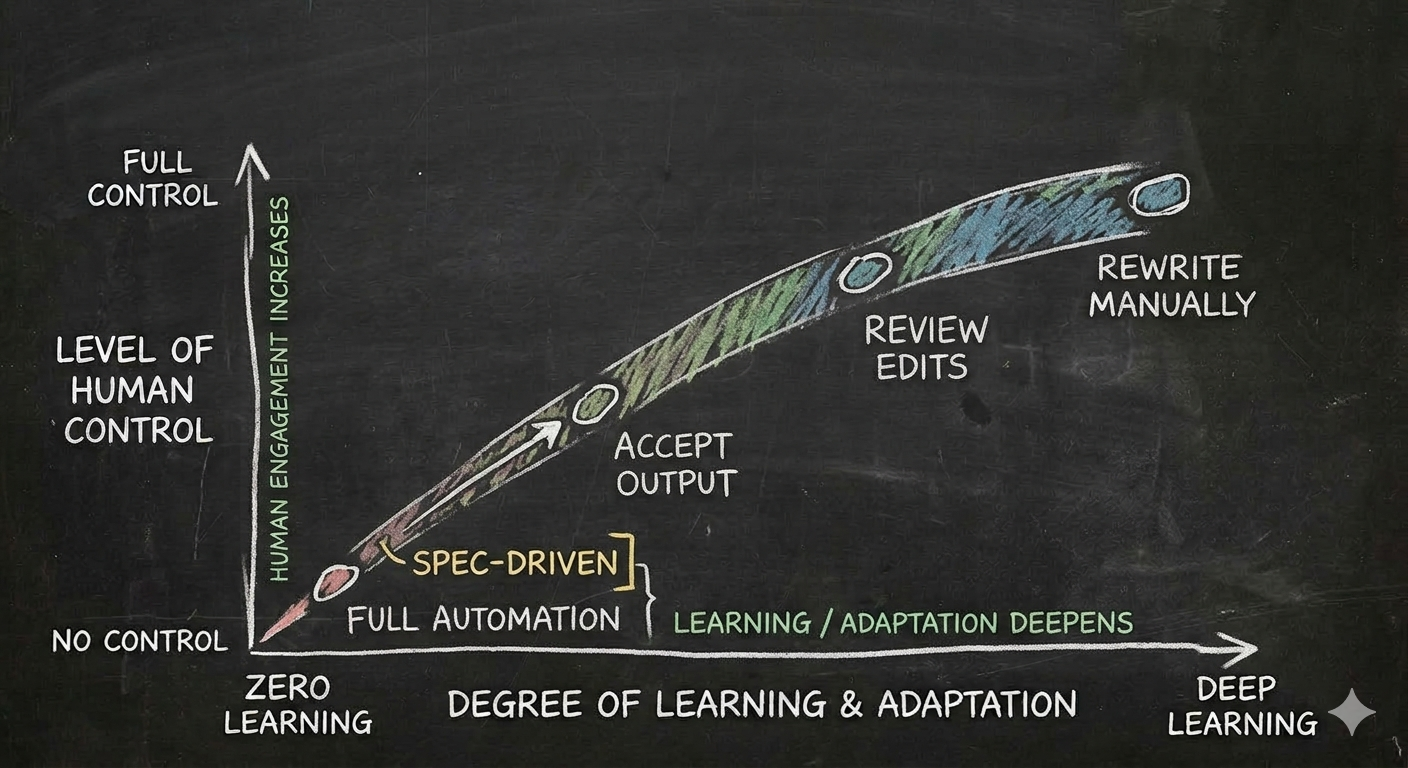

To produce fast, you minimize interaction with the AI: accept the output, verify it works, move on. The less control you keep, the less you learn. At the extreme — spec-driven development, where you describe what you want and the AI builds it — you deliver without understanding anything about how it works. This is fast, smooth, and rewarded by every metric organizations currently track.

The two paths use the same tool. They produce radically different outcomes. And the choice between them is made dozens of times a day, silently, by every knowledge worker with access to an AI assistant.

III. The organizational squeeze

Organizations are not neutral ground in this trade-off. They have a thumb on the scale, and it presses hard toward doing.

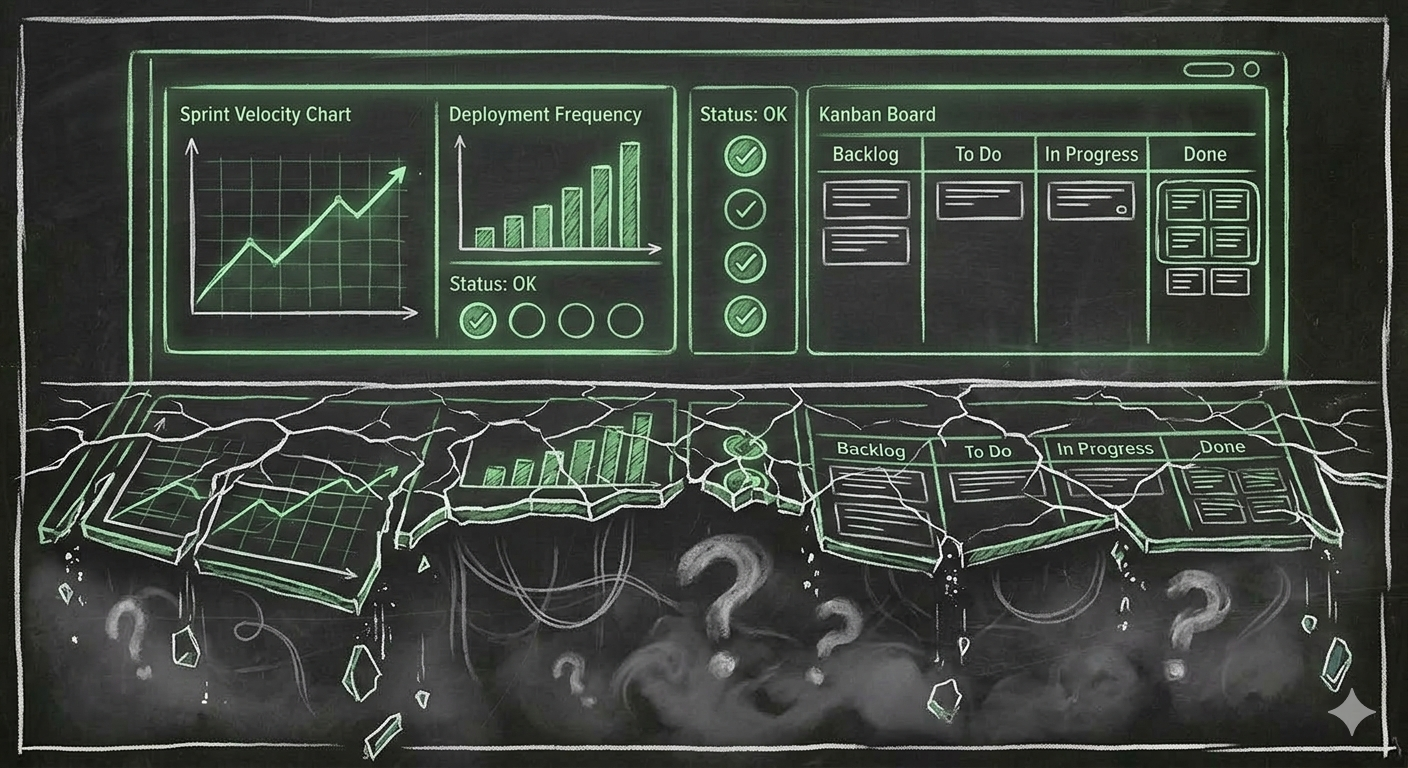

Every incentive structure in a modern software organization — when it considers only speed of delivery — rewards output: velocity, story points, features delivered, deployment frequency. These metrics made sense when doing and learning were coupled. Measuring output was measuring growth. Now it measures only half the equation — and often the wrong half.

A team under delivery pressure will — rationally, predictably — use AI to produce faster. They will accept generated code. They will skip the interrogation. They will hit their sprint goals. Their dashboards will glow green. And their collective understanding of the system they are building will quietly erode, sprint by sprint.

The problem is not that managers are wrong to want delivery. The problem is that the old proxy broke. Doing no longer implies learning. But the metrics haven’t caught up. So organizations optimize for a signal that has been decoupled from what it used to measure — and they have no replacement signal for the thing they actually need.

An organization that pressures teams to deliver is, in the AI era, implicitly pressuring them not to learn. Not by intent. By structure. The trade-off is zero-sum in the short term, and organizations are choosing without knowing they are choosing.

Conversely, an organization that invests in learning — that gives engineers time to interrogate AI output, to rebuild things manually, to sit with confusion — will produce less in any given sprint. The quarterly numbers will be softer. The board deck will be less impressive. But the engineers will actually understand the systems they operate. When the novel problem arrives, when the architecture needs real judgment, when the AI has no good answer — they will have something underneath.

The uncomfortable truth: the organizations that look most productive today may be the ones most aggressively depleting their own expertise. And the ones that look slower may be the ones still building it.

IV. The individual trap

For the individual, the trade-off is even more personal — and the incentives are even more misaligned.

Using AI to learn is slow, uncomfortable, and invisible. You ask the model to explain. You rewrite the generated code by hand. You deliberately choose the harder path. None of this shows up on a dashboard. Your velocity drops. Your pull requests come later. In a performance review, you look like you are struggling.

Using AI to produce is fast, satisfying, and highly visible. You deliver features ahead of schedule. You take on more work. You look like a top performer. You get promoted.

The individual who chooses learning over doing is making a bet on the long term — with short-term costs that are real and immediate. They are investing in understanding that will compound over years, while their peers are harvesting output that looks better right now. It is the right choice. It is also the punished choice, in most organizations, most of the time.

The ones who will thrive in the AI era are the ones willing to look slower: to question the output, to rebuild what the tool gave them, to correct the AI, to favour interactivity and control over automation and trust. They are willing to sit with not-knowing, with discomfort, with intellectual pain, in order to rewire their brains and keep learning. They are making a career bet that understanding will matter more than throughput. In an industry that currently worships throughput, this requires conviction.

V. What if the AI doers are right?

Before prescribing solutions, an honest question: what if this whole argument is wrong?

What if AI-assisted doing is the new learning — just at a different level of abstraction? What if the engineer who delivers ten services in a month learns more about system design, integration patterns, and product thinking than the one who hand-rewrites a single service to understand its internals?

There is something to this. The trade-off is not binary — it is a spectrum. At one end, full automation: you describe what you want, the AI builds it, you understand nothing. At the other end, full control: you write every line, review every decision, learn everything, produce almost nothing. Nobody should live at either extreme.

But the spectrum hides a deeper principle. Psychology research suggests that we learn most deeply when we struggle, fail, and have to recover [2]. Frictionless success barely registers. The engineer who delivers ten services without friction may accumulate exposure, but exposure is not understanding. Understanding requires wrestling with something that resists you. The smoother the AI makes the process, the less of that friction remains, and the less durable the learning becomes.

There are legitimate cases where skipping the learning is the right call. If you have already mastered a domain, if you have built that kind of service before and the patterns are familiar, then letting the AI handle it is not ignorance. It is efficiency built on prior understanding. The problem is not using AI to skip what you already know. The problem is using AI to skip what you don’t know and not noticing the difference.

But mastery is not a permanent state; it is a daily, deliberate practice [3]. Concert pianists still play scales. Elite athletes still drill fundamentals. Dancers still warm up at the barre. Chess masters practice openings every day. They all know that any skill left unexercised atrophies. If you delegate all your fundamentals to AI, the mastery you built will quietly erode. You can skip the exercise today because you did it a thousand times before. But if you skip it every day, eventually you cannot do it at all.

This is the question the spectrum really poses: not whether to use AI, but how much control you retain — and whether that control is enough to keep the friction where it counts. If you don’t know the key decisions the AI made for you, this is not deliberate practice, and you will not learn from the experience.

The doers do not learn nothing. They learn less than it feels like they do — they become skilled operators of AI agents. And that gap between perceived learning and actual learning is precisely what makes the choice so dangerous.

In the age of AI, the doers are right that velocity matters. They are wrong that it is sufficient. And the danger is not in moving fast — it is in losing the ability to tell when you have stopped understanding and, more importantly, stopped learning and growing.

VI. Rebalancing the trade-off

This choice is not going away. AI will only get better at producing output, which means the temptation to skip understanding will only grow. The question is whether you can build structures — personal and organizational — that resist the default.

For organizations: output metrics are no longer sufficient proxies for capability. Measure how people reason, not just what they deliver. Create explicit time for learning — not as a perk, but as an investment. Reward engineers who can explain why, not just show what. A team that produces slightly less but understands deeply is more resilient than one that delivers fast on borrowed comprehension.

For individuals: treat every AI interaction as a fork. Am I doing or am I learning? Both are legitimate. But if you cannot remember the last time you chose learning, you are drifting. The default — accepting the output, moving on, hitting the deadline — is always doing. Learning requires a deliberate act of friction.

The age of AI has not eliminated the need for understanding. It has made understanding optional in the short term and critical in the long term. The gap between those two timescales is where careers are made or hollowed out. The choice is yours to make. But only if you see it.

[1] Written on Richard Feynman’s blackboard at Caltech at the time of his death, February 15, 1988. Preserved in the Caltech Archives.

[2] Baumeister, R.F., Bratslavsky, E., Finkenauer, C., & Vohs, K.D. (2001). “Bad Is Stronger than Good.” Review of General Psychology, 5(4), 323–370.

[3] Ericsson, K.A., Krampe, R.T., & Tesch-Römer, C. (1993). “The Role of Deliberate Practice in the Acquisition of Expert Performance.” Psychological Review, 100(3), 363–406.