AI: The robustness imperative

This is the third piece in a series. The first, Marx Was Right About AI, examined the leverage knowledge workers hold over AI deployment during the installation period — and why that window is closing. The second, The Efficiency Trap, traced the monetary and ecological consequences of what happens when it closes. This piece asks what comes after: not just what is going wrong, but what a robust alternative looks like — for the worker, the organization, the state, and the planet.

“Living beings are not both high-performing and robust; they are robust because they are not high-performing.”

Original French: “Les êtres vivants ne sont pas performants et robustes, ils sont robustes parce qu’ils ne sont pas performants.”

— Olivier Hamant (RTBF interview, 15 December 2023; consulted 3 September 2024)

In 2011, a plant biologist at ENS Lyon named Olivier Hamant published research that would eventually force a rethinking of one of biology’s most basic assumptions. His subject was how plants respond to mechanical stress — wind, compression, the physical forces that act on growing tissue. The conventional assumption was that stress was a problem to be minimized, and that organisms shielded from stress would grow better. Hamant’s findings pointed in the opposite direction.

Plants subjected to regular mechanical perturbation grew shorter, denser, and mechanically stronger. Their cellular architecture reorganized in response to the stress, building reinforcement precisely where the forces were greatest. Plants shielded from all perturbation grew tall and structurally fragile — optimized for height in a windless environment, catastrophically exposed the moment conditions changed. The stress was not the enemy of function. It was the information that built function. Remove it and you produce a system that performs well under known conditions and collapses under novel ones.

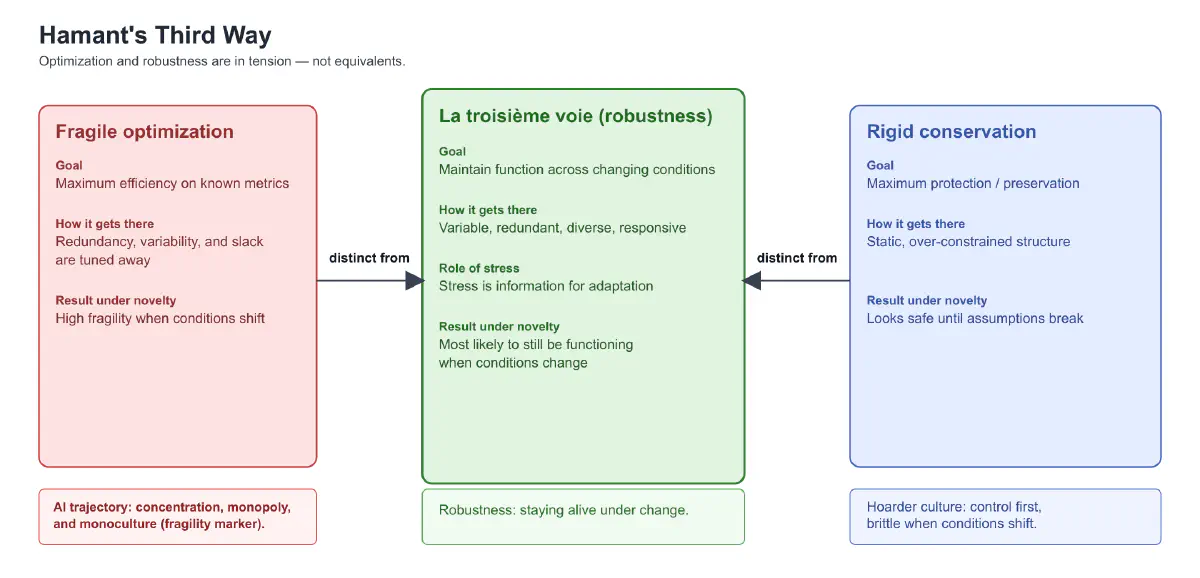

From this research, developed over the following decade and synthesized in La Troisième Voie du Vivant (2022), Hamant drew a conclusion that extends far beyond plant biology. Living systems, over billions of years of evolution, did not optimize. They became robust. And optimization and robustness are not the same thing. They are, in fundamental ways, in tension.

An optimized system is maximally efficient under known conditions — and maximally fragile under novel or variable ones. It has tuned away the redundancy, variability, and slack that robustness requires, in pursuit of performance on the metrics that currently matter. A robust system maintains function across a wide range of conditions, including conditions it has never previously encountered. It is not the best performer under any specific set of conditions. It is the system most likely to still be functioning when conditions change.

Hamant calls this robust mode the third way (la troisième voie), distinct from both fragile optimization and rigid conservation. Not the most efficient. Not the most protected. Alive: variable, redundant, stressed, responsive, diverse at every scale.

This framework, developed to understand how a plant survives a storm, turns out to be one of the most precise analytical tools available for understanding what is happening to the AI ecosystem right now — what is already genuinely robust in it, what is being systematically destroyed, and what a robust alternative would require.

A note on a related concept before proceeding: Nassim Taleb’s Antifragile (2012) covers adjacent territory and is more widely known in English. The distinction is worth holding: antifragility is the property of systems that gain from disorder — they do not merely survive perturbation but improve because of it. Robustness is the property of systems that maintain function under disorder. Both are preferable to fragility. They are not the same thing. The AI ecosystem needs robustness as a foundation before it can aspire to antifragility. Hamant’s framework is the right starting point because it is grounded in how living systems actually work, not in how financial portfolios can be structured to profit from volatility.

I. The Installation Period and Its Default Logic

Before reading the AI ecosystem through Hamant’s lens, it is worth placing that ecosystem in its context — because the context explains why the fragility vectors described below are not accidents or poor decisions. They are structural outputs of the productive system operating according to its own logic.

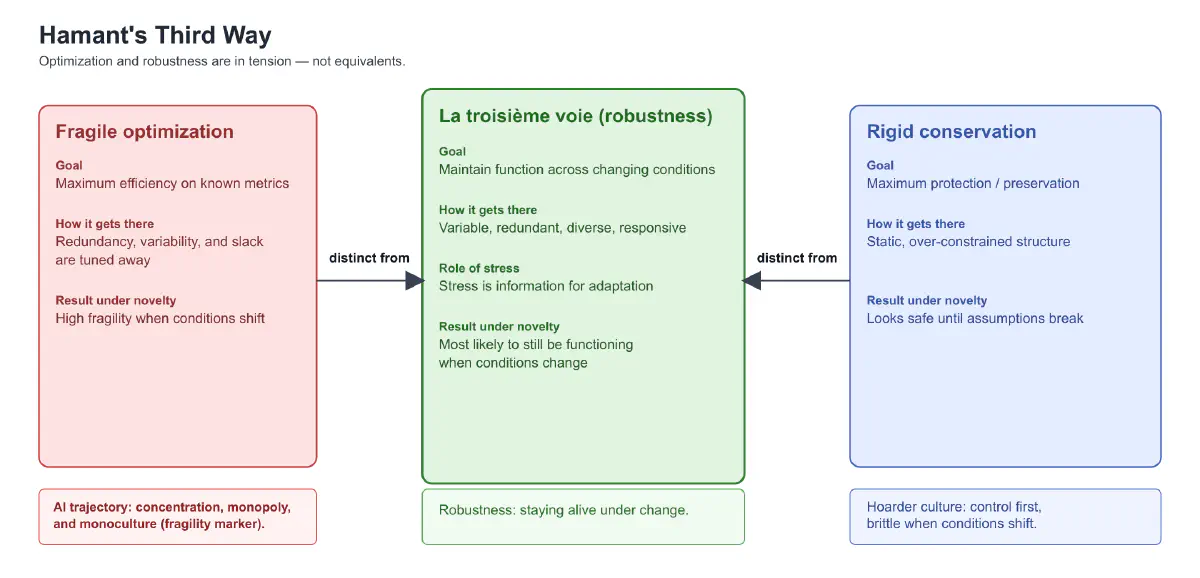

We are in the installation period of the AI technological revolution, in the sense Carlota Perez describes in Technological Revolutions and Financial Capital (2002). Financial capital is driving deployment faster than any institutional framework can adapt. The fixed capital system — models, infrastructure, data centers, GPU supply chains — is compelling adoption at every level regardless of individual or organizational preference. No firm can opt out. The compulsion propagates at the speed of software adoption, not physical installation, which means it is moving faster than any previous technological revolution.

The installation period does not merely neglect robustness; it destroys it. The optimization pressure of financial capital (extract maximum gain from the current configuration before the next configuration arrives) systematically eliminates the redundancy, diversity, slack, and local autonomy that robustness requires. This is not a choice being made by any specific actor. It is a structural consequence of the installation period itself, driven on one hand by the sheer influx of capital and on the other by the uncertainty and disruption that new technologies bring, AI being only one example.

Perez’s historical optimism — that the deployment period will eventually come, that the benefits will diffuse, that an institutional framework will emerge to distribute them — is structurally correct but politically incomplete. The post-war settlement, which was the deployment period of the mass production revolution, did not happen automatically. It was fought for, against the default logic of the productive system, by workers and political movements that built enough pressure to make the extraction politically unsustainable.

The infrastructure choices being made now — during the installation period, before the hinge — determine what the deployment period is built on. A deployment period built on fragile, concentrated, rental-model AI infrastructure serves a narrow group regardless of how much political redistribution accompanies it. The robustness argument is therefore not separate from the political economy argument. It is the infrastructure precondition for a deployment period that is worth fighting for.

II. Reading the AI Ecosystem as a Living System

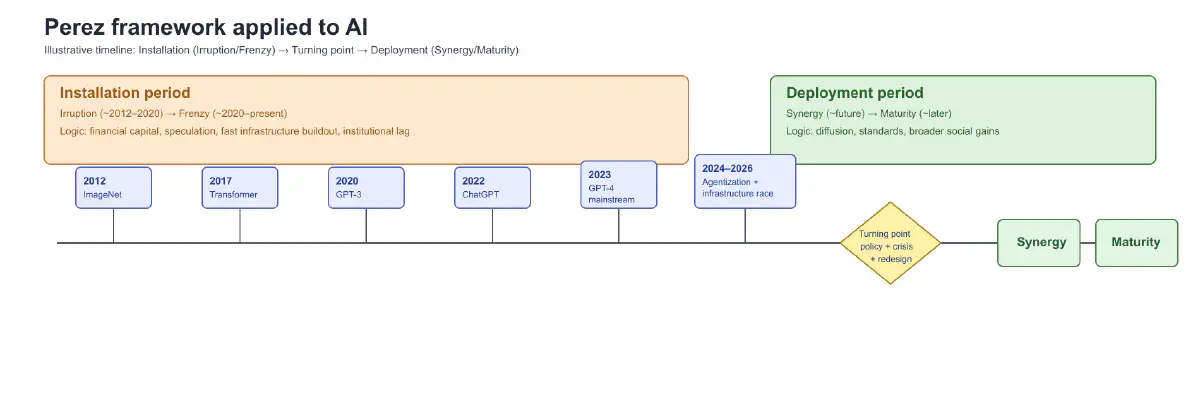

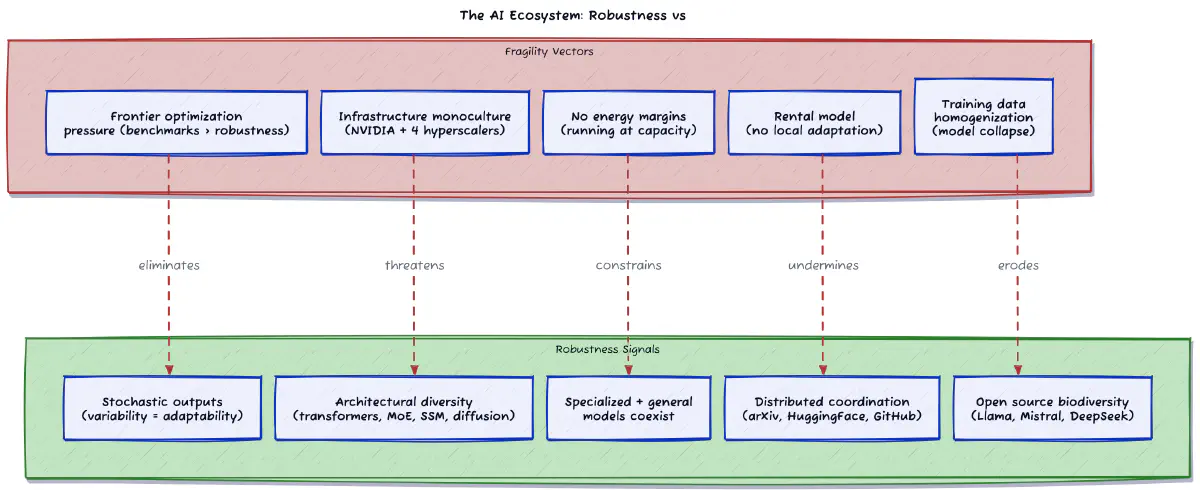

Apply Hamant’s framework to the AI ecosystem as it currently exists, and something surprising emerges: there are genuine robustness signals already present during the installation period. The ecosystem is not purely fragile. It contains the biological equivalent of a diverse, stressed, variable population, alongside the monoculture pressures that threaten to eliminate it.

Stochasticity as feature. LLM outputs are probabilistic by design. Temperature settings, sampling variability, the fundamental stochastic nature of transformer inference — these are routinely framed as limitations to be engineered away, noise to be reduced in pursuit of consistent, predictable outputs. Through Hamant’s lens they are robustness mechanisms. A deterministic AI is a brittle AI. The variability is not imprecision — it is the raw material of adaptability. The current pressure to make frontier models more consistent and controllable is, from a robustness standpoint, a fragility-increasing intervention dressed as a quality improvement.
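To make the temperature point concrete, here is a minimal sketch of temperature-scaled sampling in plain Python. The four logits are made-up numbers standing in for a model’s scores over candidate next tokens, and the function names are illustrative, not any library’s API. Low temperature concentrates probability on the top token (approaching greedy, deterministic decoding); higher temperature spreads it across alternatives, which the entropy figure measures.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn raw logits into a probability distribution, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive; use greedy decoding for T -> 0")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max before exponentiating for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs):
    """Shannon entropy in nats: higher means probability is more spread out."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def greedy_token(logits):
    """Deterministic decoding: always pick the highest-scoring token index."""
    return max(range(len(logits)), key=lambda i: logits[i])


def sample_token(logits, temperature, rng):
    """Stochastic decoding: draw a token index in proportion to its probability."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


# Hypothetical logits for four candidate next tokens.
logits = [2.0, 1.0, 0.5, 0.1]
rng = random.Random(42)
for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    draws = {sample_token(logits, t, rng) for _ in range(200)}
    print(f"T={t}: entropy={entropy(probs):.3f} nats, distinct tokens drawn={len(draws)}")
print("greedy:", greedy_token(logits))  # always 0
```

Greedy decoding returns the same token every time; the sampled draws vary, and how much they vary is a dial rather than a defect.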

Architectural diversity. The AI ecosystem currently contains transformers, mixture-of-experts models, state space models, diffusion models, retrieval-augmented systems, symbolic-neural hybrids, and neuromorphic approaches. The ecosystem has not converged on a single architecture — which is a genuine health signal. Biological ecosystems that converge on a single dominant species become maximally vulnerable to the pathogen or environmental shift that species cannot handle. Architectural diversity in AI is the equivalent of species diversity in an ecosystem: the raw material from which resilience is constructed.

World models and stochastic dynamics. The robustness argument also applies to newer paradigms often associated with Yann LeCun’s “world model” direction (e.g., JEPA-style representation learning): even when learned latent structure appears more stable or predictive than token-level generation, uncertainty does not disappear. It is relocated into latent variables, into the partial observability of the environment, and into the compounding error of multi-step prediction. In other words, “better structure” is not the same as determinism. Robust systems still need to represent uncertainty explicitly rather than suppress it.
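A toy illustration of why structure does not remove uncertainty, assuming a hypothetical fork where the next state is equally likely to be left (-1.0) or right (+1.0). This is not JEPA or any world-model architecture, and the function names are invented for the example. It shows only the general argument: a deterministic predictor trained on squared error averages the two futures into a prediction that never actually occurs, while a predictor with an explicit latent variable keeps both outcomes available.

```python
import random
import statistics


def observe_futures(num_samples, rng):
    """Hypothetical environment: at a fork the next position is -1.0 (left) or
    +1.0 (right) with equal probability; nothing observable decides which."""
    return [rng.choice([-1.0, 1.0]) for _ in range(num_samples)]


def fit_point_predictor(futures):
    """Deterministic predictor trained on squared error: the optimum is the mean."""
    return statistics.fmean(futures)


def fit_latent_predictor(futures):
    """Latent-variable predictor: a binary latent z selects a mode.

    Simplification: the two modes are separated by sign instead of being learned.
    """
    left = [f for f in futures if f < 0]
    right = [f for f in futures if f >= 0]
    return {
        "left": statistics.fmean(left),
        "right": statistics.fmean(right),
        "p_right": len(right) / len(futures),
    }


def sample_latent_predictor(model, rng):
    """Sample the latent z, then return the prediction for that mode."""
    return model["right"] if rng.random() < model["p_right"] else model["left"]


rng = random.Random(7)
futures = observe_futures(10_000, rng)
point = fit_point_predictor(futures)
model = fit_latent_predictor(futures)
print(f"point prediction: {point:+.3f}")  # near 0.0, a position that never occurs
print("latent samples:", [sample_latent_predictor(model, rng) for _ in range(6)])
```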

Diffusion and reverse-time stochasticity. Diffusion systems make this even clearer. Their generation path is built on a reverse Markov process: sampling starts from pure noise and iteratively denoises through stochastic transitions (or through deterministic ODE-based approximations, depending on sampler choice, which still begin from random noise). This is not an accidental implementation detail; it is part of how these models explore solution space. Trying to eliminate that variability entirely would trade adaptability for brittleness.
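The reverse process can be sketched in one dimension. This is a minimal DDPM-style ancestral sampler, assuming a toy “dataset” drawn from a Gaussian with hypothetical mean 2.0 and standard deviation 0.5, and a standard linear noise schedule. Because the data are Gaussian, the optimal noise predictor has a closed form, so the neural network a real diffusion model would train is replaced by an exact formula. Sampling starts from pure noise, and every step except the last injects fresh noise.

```python
import math
import random
import statistics


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Noise schedule: how much noise the forward process adds at each step."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def reverse_diffusion_1d(num_samples, data_mean, data_std, seed, num_steps=1000):
    """Ancestral (reverse Markov chain) sampling for a 1-D Gaussian toy dataset."""
    rng = random.Random(seed)
    betas = linear_beta_schedule(num_steps)
    alpha_bars = []  # cumulative product of (1 - beta)
    running = 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)

    def predict_noise(x_t, alpha_bar):
        # Exact E[noise | x_t] when the data are N(data_mean, data_std**2);
        # a real model would learn this function with a neural network.
        var_x_t = alpha_bar * data_std ** 2 + (1.0 - alpha_bar)
        return math.sqrt(1.0 - alpha_bar) * (x_t - math.sqrt(alpha_bar) * data_mean) / var_x_t

    xs = [rng.gauss(0.0, 1.0) for _ in range(num_samples)]  # start from pure noise
    for t in reversed(range(num_steps)):
        beta, alpha_bar = betas[t], alpha_bars[t]
        # Posterior variance of the reverse step; the final step adds no noise.
        sigma = math.sqrt(beta * (1.0 - alpha_bars[t - 1]) / (1.0 - alpha_bar)) if t > 0 else 0.0
        coef = beta / math.sqrt(1.0 - alpha_bar)
        xs = [
            (x - coef * predict_noise(x, alpha_bar)) / math.sqrt(1.0 - beta)
            + sigma * rng.gauss(0.0, 1.0)
            for x in xs
        ]
    return xs


samples = reverse_diffusion_1d(1000, data_mean=2.0, data_std=0.5, seed=0)
print(statistics.fmean(samples), statistics.pstdev(samples))  # close to 2.0 and 0.5
```

Different seeds give different samples from the same target distribution: the injected noise is how the sampler spreads its outputs across that distribution, not an error to be averaged away.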

Specialized versus general models. Frontier generalists and deep domain specialists coexist. Medical models, legal models, code models, vision models, reasoning models alongside general-purpose systems. This is Hamant’s multi-scale diversity — different organisms occupying different niches, the ecosystem more robust for their coexistence than any single dominant species would make it. The general model handles broad tasks. The specialist model handles specific ones better than any generalist can. The ecosystem that maintains both is more robust than the one that converges on a winner.

Emergent coordination without central control. The ecosystem coordinates through multiple overlapping layers, none of them centrally managed. At the research layer, arXiv preprints, shared benchmarks, open datasets, and competitive pressure propagate findings across the global research community without any editorial authority deciding what matters. At the collaboration and distribution layer, HuggingFace functions as the ecosystem’s shared library: the model hub where open weights releases become accessible, the Spaces platform where working demos circulate, the datasets repository where training data is shared and versioned. GitHub hosts the code, the fine-tuning scripts, the inference frameworks, and the tooling that turns model weights into deployable capability. Papers With Code bridges research publication and executable implementation, closing the loop between the arXiv layer and the GitHub layer. At the deployment layer, open tools and frameworks like Ollama, llama.cpp, and vLLM make locally runnable or self-hosted models a practical reality rather than a theoretical possibility, distributing the ecosystem’s capability to the edge, where cognitive independence actually lives. No one decided that transformers would become dominant. No committee approved the mixture-of-experts architecture. No regulator mandated the open source releases that have shaped the ecosystem’s development. This distributed, signal-based coordination across layers is precisely what Hamant identifies in biological systems as the mechanism that preserves local autonomy while enabling systemic coherence, and it is one of the ecosystem’s most important robustness properties.

Open source as biodiversity layer. Llama, Mistral, Falcon, DeepSeek, Phi, Qwen — these are the wildness that keeps the ecosystem generative. They ensure that capability is not locked into a single lineage, that experimentation continues outside commercial constraints, that the ecosystem has redundant pathways to capability that no single actor controls. This is the single most important robustness mechanism in the current AI landscape. It is also under constant pressure from the optimization logic of the installation period, which structurally favors concentration and proprietary control.

III. The Fragility Vectors

Against these robustness signals, the installation period’s fixed capital logic is pushing the ecosystem toward brittleness along several specific vectors. Each one maps precisely onto a robustness failure mode that Hamant’s framework identifies in biological systems.

Infrastructure concentration (with weak diversification signals). The compute layer that makes frontier AI possible is still highly concentrated, even as some robustness is emerging at the margins. NVIDIA remains dominant in the accelerator stack, while alternatives (TPUs, AMD accelerators, and other specialized chips) are growing but have not yet reduced systemic dependency enough to absorb major shocks. Hyperscaler concentration compounds the GPU dependency: Microsoft, Google, Amazon, and Meta control the majority of training and inference capacity, and a small number of facilities house the productive capacity of the entire ecosystem. This is maximum efficiency and maximum fragility simultaneously. The geopolitical exposure of NVIDIA’s supply chain is not hypothetical: Taiwan Semiconductor Manufacturing Company (TSMC) manufactures the chips, and that exposure is a present-tense risk, not a future one. A regulatory intervention on hyperscaler data centers, a physical failure at a concentrated facility, any single point of infrastructure disruption: the optimization of the compute layer creates systemic brittleness that the diversity of the model layer cannot compensate for. Hamant’s biological equivalent is a monoculture agricultural system: maximum yield under optimal conditions, catastrophic exposure to any pathogen the single variety cannot handle.

The rental model removes local adaptation. API-only access to AI capability is architecturally incompatible with robustness. Hamant’s framework requires that components respond to local conditions: that local autonomy is real and operational, and that the system learns from the specific stresses of its specific environment. A knowledge worker or organization that accesses AI exclusively through a remote API cannot inspect or modify the model, can fine-tune it only where and how the provider permits, and cannot stress-test it beyond the interface the provider chooses to expose. They cannot develop the deep operational knowledge that comes from genuine engagement with a tool. They are passive recipients of a fixed capability, shielded from the productive friction that builds robustness, exactly as Hamant’s plants shielded from wind grow tall and fragile.

This is not only a technical architecture question; it is also a governance and capability question. A rental-only model optimizes short-term access while reducing local adaptation and increasing operational lock-in. When conditions change — pricing, policy, service terms, or geopolitical constraints — dependent users absorb the risk.

Ivan Illich named the underlying principle in Tools for Conviviality (1973): tools become counterproductive when they exceed the threshold of complexity beyond which users can no longer understand, modify, or control them — at which point the tool begins to serve the institution rather than the person. The API that processes your work but cannot be inspected, adapted, or owned by you is past the convivial threshold.

Frontier model optimization pressure. The race to build the most capable frontier model is an optimization race, not a robustness race. Benchmark-driven development, reinforcement learning from human feedback (RLHF), and constitutional training all reduce the variability and noise that Hamant identifies as robustness features, in pursuit of consistent, measurable, controllable performance. The most prominent and widely deployed nodes in the AI ecosystem are being made progressively more brittle as they become more capable. The optimization is real. The fragility it produces is equally real, and less visible.

No margins in the energy layer. The energy infrastructure supporting frontier AI is running at or near capacity, with demand growing faster than supply. There is no slack. Hamant is specific on this: a system operating at maximum capacity has no buffer against perturbation. The hyperscaler response (building nuclear plants, signing long-term energy contracts, acquiring renewable capacity) is an attempt to raise the ceiling, not to build margins below it. The logic remains: run as hot as possible, as fast as possible, with no reserve. This is the definition of a non-robust system at the infrastructure level. According to the IEA’s Electricity 2024 report, data center electricity consumption could more than double between 2022 and 2026, a demand curve that infrastructure investment is racing to meet with no systemic slack built in. Recent efficiency jumps, including training on constrained hardware, improved compression and distillation pipelines, and context and inference optimizations, are genuine robustness signals at the model layer, but so far they are being outpaced by deployment growth at the system layer.
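A back-of-envelope calculation shows how quickly slack disappears under compound growth. It assumes, for simplicity, that demand exactly doubles over the four years from 2022 to 2026, and uses a hypothetical 25% spare capacity held fixed; neither figure describes any particular grid.

```python
import math


def implied_annual_growth(multiple, years):
    """Compound annual growth rate that multiplies demand by `multiple` over `years`."""
    return multiple ** (1.0 / years) - 1.0


def years_until_capacity_exhausted(headroom_ratio, annual_growth):
    """Years until compound demand growth consumes fixed spare capacity.

    headroom_ratio is capacity divided by current demand, so 1.25 means 25% slack.
    """
    return math.log(headroom_ratio) / math.log(1.0 + annual_growth)


rate = implied_annual_growth(2.0, 4)  # simplifying assumption: a clean doubling in 4 years
print(f"implied demand growth: {rate:.1%} per year")  # 18.9% per year
print(f"25% slack lasts {years_until_capacity_exhausted(1.25, rate):.2f} years")  # 1.29 years
```

At that pace, a margin that looks comfortable on paper is gone in well under two years unless capacity grows just as fast.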

Homogenization of training data. The models being trained at scale are consuming the same internet. As the internet fills with AI-generated content — which it is, rapidly — the training data for the next generation of models includes increasing proportions of outputs from the previous generation. This is the cognitive equivalent of monoculture agriculture: the diversity of the training corpus is narrowing precisely as the models trained on it become more capable and more widely deployed. Diversity of training data is diversity of the ecosystem. Its erosion is a robustness signal moving in the wrong direction. Researchers have begun calling this model collapse — the degradation that occurs when models train recursively on their own outputs — and it is an emergent property of the current deployment trajectory, not a hypothetical.
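A toy simulation illustrates the diversity-loss side of model collapse. It is a deliberately crude stand-in, not how any real model is trained: each “generation” estimates category frequencies from a finite corpus produced by the previous generation, then samples the next corpus from those estimates. The 50 categories, the corpus size of 100, and the Zipf-like starting distribution are arbitrary assumptions. A rare category that fails to appear even once gets weight zero and can never come back, so the count of surviving categories can only fall.

```python
import random
from collections import Counter


def long_tailed_weights(num_categories):
    """Zipf-like starting distribution: a few common items, many rare ones."""
    return [1.0 / rank for rank in range(1, num_categories + 1)]


def recursive_training(num_categories, corpus_size, generations, seed):
    """Toy model collapse: each generation learns only from the previous one's output.

    'Training' = estimating category frequencies from a finite corpus;
    'generation' = sampling the next corpus from those estimates.
    Returns how many distinct categories survive after each generation.
    """
    rng = random.Random(seed)
    categories = list(range(num_categories))
    weights = long_tailed_weights(num_categories)
    survivors = []
    for _ in range(generations):
        corpus = rng.choices(categories, weights=weights, k=corpus_size)
        counts = Counter(corpus)
        # A category absent from this corpus gets weight 0 and can never return.
        weights = [counts[c] for c in categories]
        survivors.append(sum(1 for w in weights if w > 0))
    return survivors


history = recursive_training(num_categories=50, corpus_size=100, generations=100, seed=3)
print("distinct categories after generations 1, 10, 100:", history[0], history[9], history[-1])
```

The rarest categories tend to vanish first, the same tail-loss pattern described in research on model collapse.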

IV. Cognitive Independence: The Individual and the Organization

Scale Hamant’s local autonomy principle from the ecosystem to the human — to the knowledge worker and the organization — and the robustness argument becomes immediately practical.

At the individual level: the knowledge worker who owns AI tools they can modify, fine-tune, and adapt is a robust node. They develop genuine capability through friction with the tool. They accumulate domain-specific adaptations that represent real knowledge, not borrowed access. They maintain the cognitive independence that makes their work genuinely theirs — and genuinely portable. The knowledge worker who accesses AI exclusively through commercial APIs is a dependent node. Their capability is rented. Their adaptations belong to the platform. Their cognitive development is shaped by what the rental service makes available and what it decides to change, deprecate, or reprice.

This connects directly to the argument in Marx Was Right About AI: the leverage workers retain over AI deployment during the installation period depends on their being genuine operators of the technology, not passive consumers of a service. Ownership is not merely preferable — it is the structural condition for the leverage to exist at all. A workforce that rents its cognitive tools from the same companies deploying AI to replace it has no leverage and no robustness simultaneously. The two failures are the same failure.

The practical path toward individual cognitive independence is available now, through tools that already exist. Local models for sensitive and domain-specific work — not as a rejection of frontier capability but as a foundation that cannot be withdrawn, repriced, or modified without consent. Open tooling such as Ollama, llama.cpp, and vLLM makes this accessible without heavy infrastructure overhead. Fine-tuning on personal and professional knowledge — the worker’s accumulated expertise embodied in a model they own, not fed into a hyperscaler’s training pipeline — is increasingly accessible through platforms like HuggingFace’s fine-tuning tools and open source frameworks. Genuine engagement with AI systems — breaking them, stress-testing them, adapting them to specific contexts. Hamant’s stress-as-information principle applies directly: the friction of deep engagement is not a problem to be avoided but the mechanism through which real capability is built. The worker who has struggled with a model’s failure modes knows something the worker who has only consumed its successes does not.

At the organizational level, the robustness argument is also a risk management argument in terms that Lyn Alden’s framework makes precise. An organization whose operational capability depends on continued access to a remote API is exposed to pricing changes, service discontinuation, terms-of-service modifications, and geopolitical disruption in ways that an organization running owned infrastructure is not. The rental model transfers operational risk to the renter while keeping the productive asset — and its accumulated learning — with the owner. This is not a neutral commercial arrangement. It is a structural dependency that the installation period is building into the productive infrastructure of every organization simultaneously.

The firm that runs models it owns, fine-tuned on its own domain knowledge, adapted to its own workflows, auditable by its own technical staff — this firm is a robust node. It has genuine AI capability, not borrowed access to someone else’s. It retains proprietary knowledge rather than feeding it to a hyperscaler’s training pipeline. It can respond to perturbation — a model provider’s service disruption, a pricing change, a regulatory intervention — without losing its productive capacity. It has, in Hamant’s terms, the local autonomy that robustness requires.

V. Digital Sovereignty: The State

The robustness argument at its largest scale is also its most politically concrete — and the most immediately actionable for governments that are willing to understand what they are currently building.

No government should run critical public infrastructure on AI systems operated and controlled by foreign corporations. This is not a nationalist argument. It is a robustness argument — and a monetary argument, in the sense that The Efficiency Trap develops. The productivity surplus generated by AI deployed in public services flows to the balance sheets of foreign corporations rather than into the domestic fiscal system. The efficiency gain is real. The monetary leakage is real. And the dependency it creates is structural, not incidental.

The dependency is not hypothetical. European governments are running significant public administration on Microsoft Azure OpenAI infrastructure. Public health systems in multiple countries are integrating Google Cloud AI into clinical workflows. Judicial systems are using tools whose weights, training data, update schedules, and operational parameters are controlled in a foreign jurisdiction, by private companies whose primary accountability is to their shareholders.

The specific robustness risks are concrete. A foreign government or its regulatory apparatus can degrade, modify, or terminate service — intentionally or as collateral damage in a geopolitical dispute. Training data and alignment fine-tuning reflect the cultural, legal, and political assumptions of the country and company that built the model — assumptions that may not be neutral with respect to the deploying state’s interests. Audit is architecturally impossible on closed systems — a government cannot verify what a black-box model is doing with sensitive public data. Pricing and terms are set unilaterally by the provider — the deploying state has no leverage over the cost of its own critical infrastructure.

This applies asymmetrically but universally. The United States demonstrably does not want its military and intelligence infrastructure dependent on Chinese models — the chip export controls and model access restrictions make that explicit. China agrees with the principle from the other direction: its domestic AI investment is explicitly a sovereignty project. Europe is the most exposed major economy: significant AI consumption, growing regulatory sophistication, and the least indigenous frontier capability. The EU AI Act is a serious regulatory effort that does not address the infrastructure dependency it is regulating around. You can regulate the use of tools you do not own. You cannot audit them, cannot modify them, and remain operationally dependent on their continued availability.

The commons is the sovereignty solution that does not require every state to build a frontier model from scratch. Open weights models are sovereign-neutral. A government that builds its public AI infrastructure on Llama, Mistral, or other open weights models can audit, modify, deploy, and control its own systems. It is not dependent on a foreign corporation’s API, pricing schedule, or terms of service. It can fine-tune on its own language, its own legal system, its own administrative context. It owns the tool.

France’s investment in Mistral is a partial sovereignty play — and notably, Mistral has been the European AI project most consistently willing to release open weights, which is not coincidental. DeepSeek is China’s sovereignty model — built indigenously, released with open weights as a strategic move that simultaneously demonstrated capability parity with Western frontier models and undermined the argument for dependency on them. Both cases illustrate the same principle: open weights are the infrastructure of independence.

The policy prescription is not autarky. It is the maintenance of the capacity for independence — the option to operate without foreign infrastructure if necessary, while participating in the global AI ecosystem by choice. Hamant’s redundancy principle applied to states: not isolation, but the maintained ability to function independently. The state that has never built the capability to operate its own AI infrastructure has no option when the dependency becomes a liability. The state that has built it — even if it currently chooses to use commercial services for cost or capability reasons — retains the leverage that sovereignty requires.

VI. The Commons as Cognitive Infrastructure

The constructive core of the robustness argument is this: open weights models are to cognitive infrastructure what public libraries were to written knowledge. Universally accessible. Auditable. Not subject to extraction by the entity controlling access. Maintained as a common resource because the alternative — knowledge controlled by whoever can afford to own it — is incompatible with a functioning society.

This is not a rhetorical analogy. Elinor Ostrom’s Governing the Commons (1990), the work at the center of her share of the 2009 Nobel Prize in Economics, identified the specific conditions under which commons succeed rather than collapsing into Hardin’s tragedy. The conditions are precise: clearly defined boundaries, rules matched to local conditions, collective-choice arrangements, monitoring mechanisms, graduated sanctions, conflict-resolution mechanisms, recognition by external authorities of the community’s right to organize, and, for larger systems, nested enterprises. The open source AI ecosystem meets some of these conditions partially. It fails others entirely.

The open source AI commons currently lacks sustainable funding independent of corporate goodwill. Meta releases Llama because it currently suits Meta’s competitive interests — it undermines the moat of closed model providers while Meta retains infrastructure advantages. A commons whose existence depends on a single corporation’s strategic calculation is not a commons. It is a gift that can be withdrawn. Mistral releases open weights partly because French industrial policy creates incentives to do so. DeepSeek releases open weights as a geopolitical signal. None of these motivations are wrong — but none of them are sufficient foundations for infrastructure that societies depend on.

The commons also lacks open training data provenance. Open weights without open training data is a limited commons. The model is available. The knowledge of what it was trained on, what biases it contains, what it cannot do and why — this remains largely opaque even in open weights releases. True commons infrastructure requires both. Efforts like EleutherAI’s The Pile, Common Crawl, and the BigScience community that produced BLOOM are early signals of what open training data infrastructure looks like — but they remain underfunded relative to the scale of proprietary training runs.

Shared compute for researchers and public institutions without hyperscaler dependency is equally absent. The ability to train and fine-tune models at meaningful scale should not require a commercial cloud contract. Public research institutions, governments, civil society organizations — all currently depend on commercial infrastructure to do serious AI work. This structural dependency is what the commons model should resolve.

The institutional model that addresses all of these is not novel. CERN — the European Organization for Nuclear Research — is publicly funded fundamental research infrastructure, shared across member states, producing outputs that no single national investment could generate and whose benefits are genuinely common. Its most famous byproduct, the World Wide Web, was released without intellectual property restriction specifically because the institution recognized that the value of universal access exceeded any value that could be captured through control. An equivalent institution for AI infrastructure — publicly funded, member-state governed, producing open models, open training data, and shared compute capacity — is the institutional response that the scale of the technology warrants.

Yochai Benkler’s The Wealth of Networks (2006) provides the theoretical foundation for why this works economically, not just ethically. Commons-based peer production — the model that produced Linux, Wikipedia, and the open source software ecosystem — aggregates distributed knowledge and motivation that no single organization can capture. The open source AI ecosystem is the contemporary instantiation. Its robustness depends on treating it as infrastructure to be maintained, not a byproduct of commercial competition to be consumed while it lasts.

VII. What a Robust AI Ecosystem Looks Like

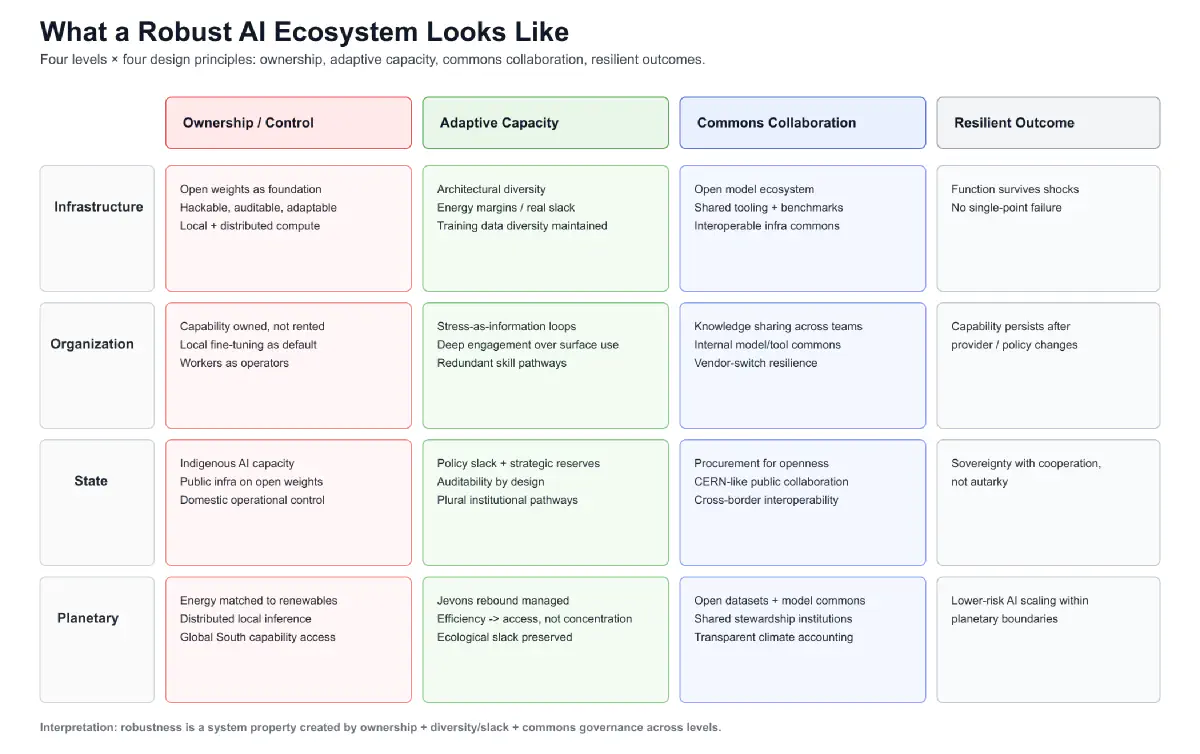

Hamant’s framework supplies the criteria for the deployment period that Perez describes and that political choices made now will shape. Not the most capable AI ecosystem. Not the most efficient. The most robust: the one most likely to maintain function across conditions that cannot currently be predicted, including conditions no one would choose.

At the infrastructure level, robustness looks like diverse model architectures coexisting rather than converging on a winner; open weights as the foundation layer — owned, hackable, adaptable, auditable; distributed compute matched to local renewable energy capacity rather than concentrated in hyperscaler data centers running at the margin of grid stability; energy margins — infrastructure operating below maximum capacity, with genuine slack; training data diversity actively maintained rather than passively eroded by the accumulation of AI-generated content.

At the organizational level, robustness looks like AI capability treated as something to own and develop rather than rent and consume; fine-tuning on local domain knowledge as standard practice rather than exceptional effort; workers who are genuine operators of AI tools rather than passive consumers of AI services; the productive friction of deep engagement — Hamant’s stress-as-information — building genuine organizational capability rather than surface-level adoption that evaporates when the service provider changes its terms.

At the state level, robustness looks like:

- indigenous AI capability in every major economy, not to close borders but to maintain the option of independence
- public infrastructure built on open weights rather than foreign commercial APIs
- fiscal policy that routes the productivity surplus of AI back into the wage pipe: Alden’s circuit breaker as permanent architecture rather than emergency response
- procurement policy that requires auditability, open weights, and domestic operational control for critical public systems

At the planetary level, robustness looks like:

- energy infrastructure for AI matched to renewable capacity rather than driving new fossil fuel extraction
- distributed local compute reducing the energy cost of inference (the trajectory toward locally runnable models is also a trajectory toward lower total energy per unit of AI deployment)
- the Jevons rebound managed deliberately, with efficiency gains in AI capability used to expand access rather than deepen concentration
- the open model ecosystem extending to the Global South, where distributed local AI creates the conditions for the wage-pipe reconstruction from below that the managed degrowth transition requires

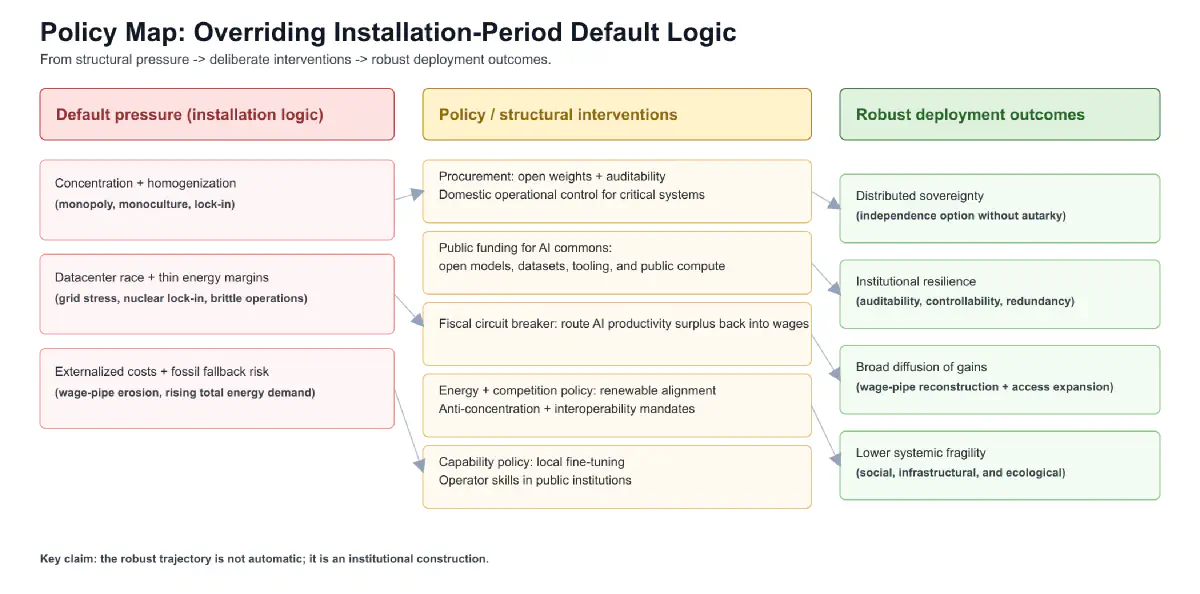

This is the scenario behind the conditional hope, rather than structural alarm, on which the second piece in this series ends. The efficiency trap is real. The doom loop is real. The externality trap is real. But they are outputs of the installation period’s default logic, and default logic can be overridden by deliberate choice. The robust ecosystem described above is not utopian. Every component of it already exists in some form. Open weights models are being released. The distributed compute trajectory is real. The digital sovereignty argument is being made in European and Asian policy circles. The CERN model is operational in physics and could be replicated for AI. What does not yet exist is the political coherence to treat these components as a system, to fund them as public infrastructure, and to defend them against the installation period’s continuous pressure toward concentration, homogenization, and extraction.

VIII. The Political Choice

Robustness is not the default trajectory. The fixed capital logic of the installation period generates fragility automatically: concentration, homogenization, rental dependency, no margins, externalized costs. The optimization pressure does not pause to ask whether the system being optimized will survive the next perturbation. That is not a question the installation period asks. It is a question the deployment period inherits.

Choosing robustness is choosing against the grain of the productive system’s own momentum. It requires specific political acts.

- Treating open source AI as public infrastructure: funding it deliberately, governing it collectively through institutions accountable to users rather than contributors, and maintaining it as a commons rather than consuming it as a byproduct of commercial competition. The Hugging Face ecosystem, the open weights releases, and the collaborative research infrastructure all need public funding and public governance to be robust, not just corporate generosity and competitive strategy.

- Building digital sovereignty into procurement, regulation, and industrial policy, not as protectionism but as the maintenance of the capacity for independence that every robust system requires. The state that cannot operate its own cognitive infrastructure is not sovereign in any meaningful sense. The EU AI Act regulates how AI is used. What Europe needs is an EU AI infrastructure policy that addresses ownership and operational independence.

- Giving individuals and organizations the technical and legal right to own, modify, and run the AI tools they depend on. The convivial threshold Illich identified fifty years ago (the point at which tools begin to serve institutions rather than people) is the threshold the rental model crosses by design. Reversing this requires both technical access (open weights, local deployment) and legal protection (the right to modify, the right to audit, the right to refuse dependency).

- Routing the monetary surplus of AI productivity back into the wage pipe: Alden’s fiscal dominance as deliberate policy rather than crisis response. A robust AI ecosystem built on a collapsing demand base is still a system heading for crisis. Infrastructure robustness and monetary robustness are not separate problems. They are the same problem at different scales.

Put plainly: VC money does not flow forever. Sooner or later, AI companies have to generate durable revenue. Once that transition takes hold, subsidized token pricing ends. Token prices will be pulled toward real unit economics (compute depreciation, grid power, cooling, networking, and capital costs) and away from promotional pricing. And because energy is a major cost driver, token prices will increasingly track energy-market volatility and infrastructure constraints. Any institution designed around permanently cheap inference is building on a temporary subsidy, not a stable foundation.
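The claim that token prices will track real unit economics, and energy prices in particular, can be made concrete with back-of-envelope arithmetic. What follows is a minimal sketch, not a model of any real provider. It assumes a single inference server whose break-even price covers straight-line hardware depreciation, electricity (with cooling folded into the average power draw), and one flat hourly figure for networking, facilities, and capital costs. The function name `breakeven_per_million_tokens` is hypothetical, and every input value is a placeholder chosen for easy arithmetic rather than a measured figure.

```python
HOURS_PER_YEAR = 24 * 365


def breakeven_per_million_tokens(
    hardware_usd: float,
    depreciation_years: float,
    power_kw: float,
    usd_per_kwh: float,
    other_usd_per_hour: float,
    tokens_per_second: float,
    utilization: float,
) -> dict[str, float]:
    """Split the break-even price of one million tokens into cost components.

    Hypothetical model with simplifying assumptions: straight-line depreciation,
    a constant average power draw (cooling included) paid for every hour whether
    or not the server is busy, and one flat hourly figure for everything else.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    hourly_costs = {
        "depreciation": hardware_usd / (depreciation_years * HOURS_PER_YEAR),
        "energy": power_kw * usd_per_kwh,
        "other": other_usd_per_hour,  # networking, facilities, cost of capital
    }
    # Only busy hours produce tokens, but idle hours still accrue cost.
    million_tokens_per_hour = tokens_per_second * 3600 * utilization / 1_000_000
    return {name: cost / million_tokens_per_hour for name, cost in hourly_costs.items()}


# Placeholder inputs chosen for easy arithmetic, not real-world figures.
baseline = dict(
    hardware_usd=350_400, depreciation_years=4, power_kw=20, usd_per_kwh=0.25,
    other_usd_per_hour=3, tokens_per_second=2_500, utilization=0.8,
)

for label, power_price in [("baseline", 0.25), ("power price doubled", 0.50)]:
    parts = breakeven_per_million_tokens(**{**baseline, "usd_per_kwh": power_price})
    total = sum(parts.values())
    print(f"{label}: ${total:.2f} per 1M tokens, energy share {parts['energy'] / total:.0%}")
```

With these placeholder inputs the break-even price is $2.50 per million tokens, and doubling the electricity price raises it by about 28 percent, which is exactly energy’s share of the baseline cost. In this simple model that relationship holds for any inputs: the proportional sensitivity of the token price to the power price equals energy’s share of total serving cost, so the larger that share, the more closely token prices follow the energy market.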

The alternative trajectory is a cognitive infrastructure that resembles the financial system of 2008: maximally optimized, apparently stable, concentrated in a small number of nodes, running with no margins, and completely unprepared for the perturbation it cannot see coming. The financial crisis of 2008 did not happen because bankers were unusually malicious. It happened because the system had optimized away every buffer, every redundancy, every margin of safety in pursuit of efficiency — and then encountered conditions it had not been designed for. The cascade was automatic. No one chose it. The system produced it structurally.

Hamant’s plants survive the storm because they spent years being shaped by smaller storms. The AI ecosystem that survives what is coming is the one being shaped by variability, diversity, and productive friction now — not the one being optimized into brittleness in pursuit of this quarter’s benchmark score.

The installation period will be over soon. The hinge is coming — the crisis that marks the transition from installation to deployment, the moment when the political question of who benefits from the productive system’s gains becomes unavoidable. What is built before that hinge is what will be available to work with when it arrives.

The productive system will not build it. It does not build robustness. It builds efficiency. Robustness is what humans choose, deliberately, against the grain of the system’s own momentum, because they understand what the system cannot: that a future worth living in requires infrastructure designed for it.

Further Reading

- Olivier Hamant, La Troisième Voie du Vivant (2022): the core text; not yet translated into English, which is itself an argument for the kind of open knowledge infrastructure this piece describes. His INRAE researcher profile and public lectures are the best English-language entry point. The opening quote comes from an RTBF interview (15 December 2023): “Living beings are not both high-performing and robust; they are robust because they are not high-performing.” (“Les êtres vivants ne sont pas performants et robustes, ils sont robustes parce qu’ils ne sont pas performants.”)

- Nassim Taleb, Antifragile (2012): the closest English-language analogue to Hamant; robustness maintains function under disorder, antifragility gains from it, and both are preferable to fragility

- Donella Meadows, Thinking in Systems (2008): the systems dynamics foundation for understanding how feedback loops, stocks, and flows produce emergent outcomes that no individual actor intends

- Elinor Ostrom, Governing the Commons (1990): the foundational work on when commons succeed rather than collapse; her conditions apply directly to open source AI infrastructure

- Yochai Benkler, The Wealth of Networks (2006): the theoretical and empirical case for commons-based peer production; open source AI is its contemporary instantiation

- Ivan Illich, Tools for Conviviality (1973): tools that users can understand, modify, and control versus tools that create dependency; the rental model versus ownership argument, made fifty years before it became urgent

- Carlota Perez, Technological Revolutions and Financial Capital (2002): the installation and deployment period framework; the robustness infrastructure built now determines the deployment period’s distribution

- Donella Meadows et al., The Limits to Growth (1972): the planetary boundary argument that the deployment period must reckon with; the standard run scenario has tracked actual data with uncomfortable accuracy for fifty years

- Karl Marx, Fragment on Machines (Grundrisse, 1858): the general intellect argument; the fixed capital system that Hamant’s robustness framework must contend with

- Herman Daly, Beyond Growth (1996): the economic argument that GDP accounting is structurally blind to natural capital depletion; essential background for the planetary section

- Lyn Alden, Broken Money (2023): the monetary framework that connects infrastructure robustness to demand maintenance; read alongside The Efficiency Trap

- William Stanley Jevons, The Coal Question (1865): the origin of the Jevons paradox, the rebound effect that makes cheap AI more disruptive, not less; and the second direction in which it cuts
