Marx Was Right About AI

This is the first piece in a series. The second, The Efficiency Trap, examines what happens to the monetary system when the displacement described here runs to completion. The third, The Robustness Imperative, asks what kind of AI infrastructure serves workers, organizations, and states — rather than extracting from them.

For two centuries, the story of automation has followed a simple script. Capital invests in machines. Workers are displaced. The productivity gain flows upward. Repeat.

It worked because the machines didn’t need the workers’ permission. Ford’s assembly line didn’t require the goodwill of the workers it deskilled. The internet didn’t ask travel agents whether they consented to being replaced by booking platforms. Capital deployed the technology unilaterally. Workers could resist or accept — the machine ran either way.

But before we get to where AI breaks this script, it is worth understanding why the script ran so reliably. The answer is not simply that capital was powerful and workers were weak. It is that the productive system — the accumulated fixed capital of factories, infrastructure, and technology — developed its own logic, independent of any individual capitalist’s intentions. A firm that did not adopt the assembly line was undercut by one that did. A travel agency that did not move online lost customers to one that had. The technology did not spread because managers chose it. It spread because the productive system compelled adoption, firm by firm, sector by sector, regardless of the human consequences. No villain was required. The system produced the outcome structurally.

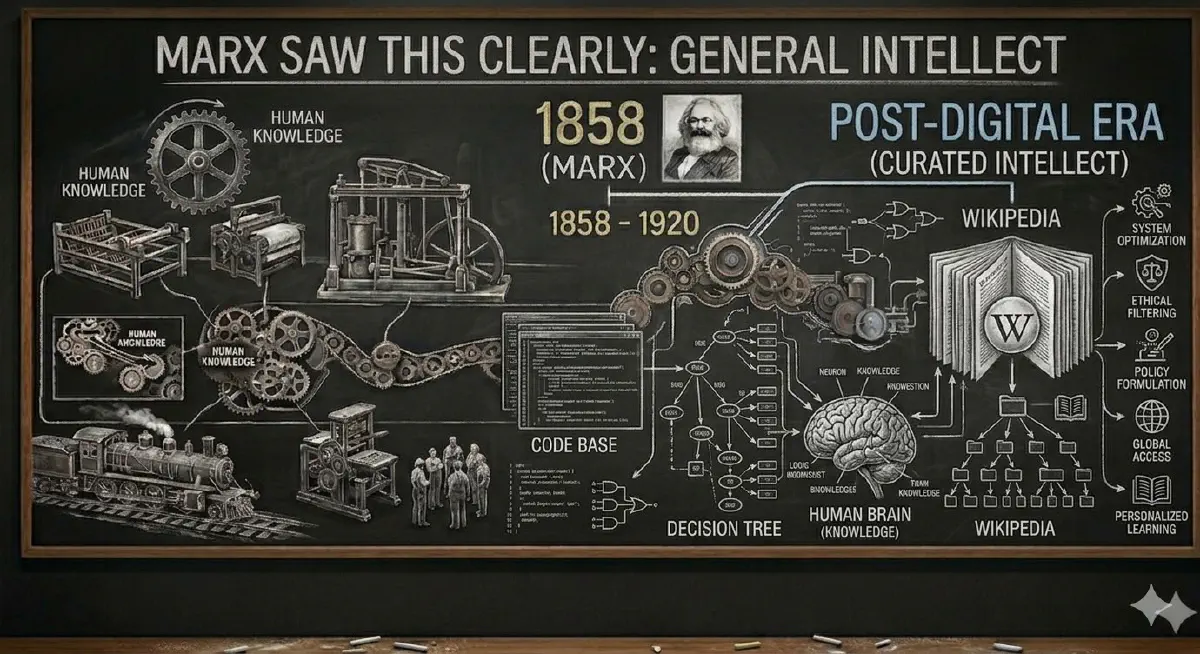

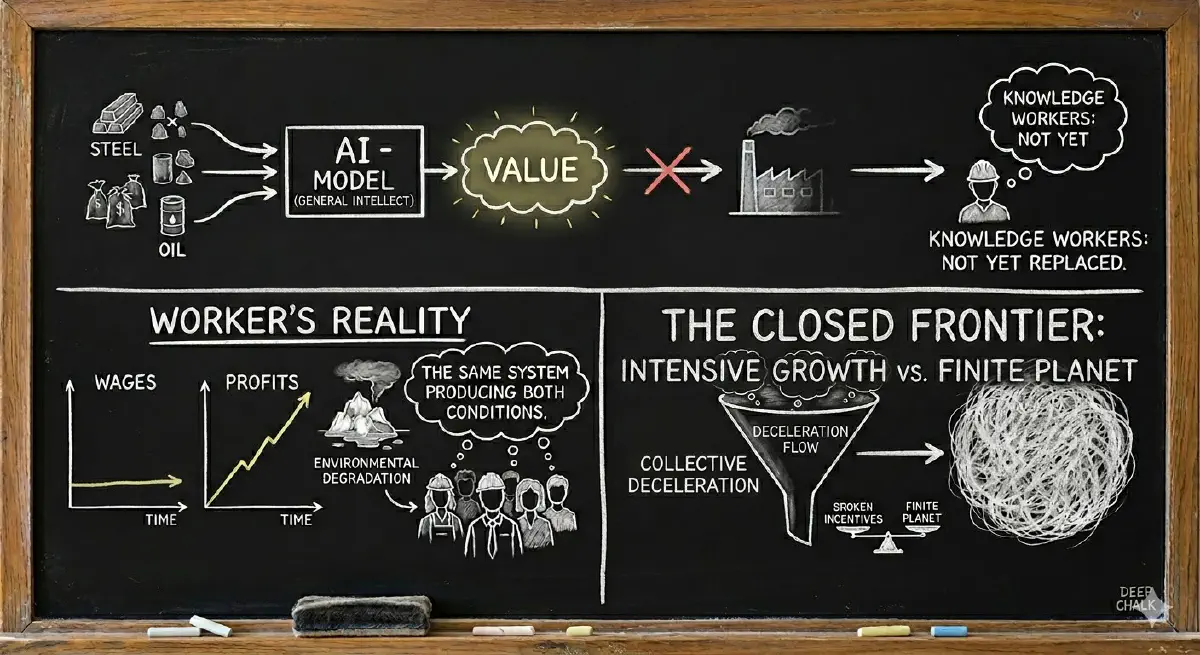

Marx saw this clearly. In the Grundrisse, he described what he called the general intellect — the point at which accumulated human knowledge becomes embodied in machines and infrastructure, and begins to operate as an autonomous productive force. The knowledge is no longer in the workers. It is in the fixed capital. And once it is there, the workers who contributed it become, in the system’s logic, redundant inputs to be minimized.

AI is the most complete instantiation of the general intellect in history. A vast share of the text, images, code, and recorded decisions that human beings have produced has been ingested, compressed, and embodied in models that now operate as fixed capital. The general intellect is no longer metaphorical. It is running on servers, and it is being deployed by the same compulsive logic that deployed every previous wave of automation: not because any individual firm chose it freely, but because the productive system leaves no firm the option of not choosing it.

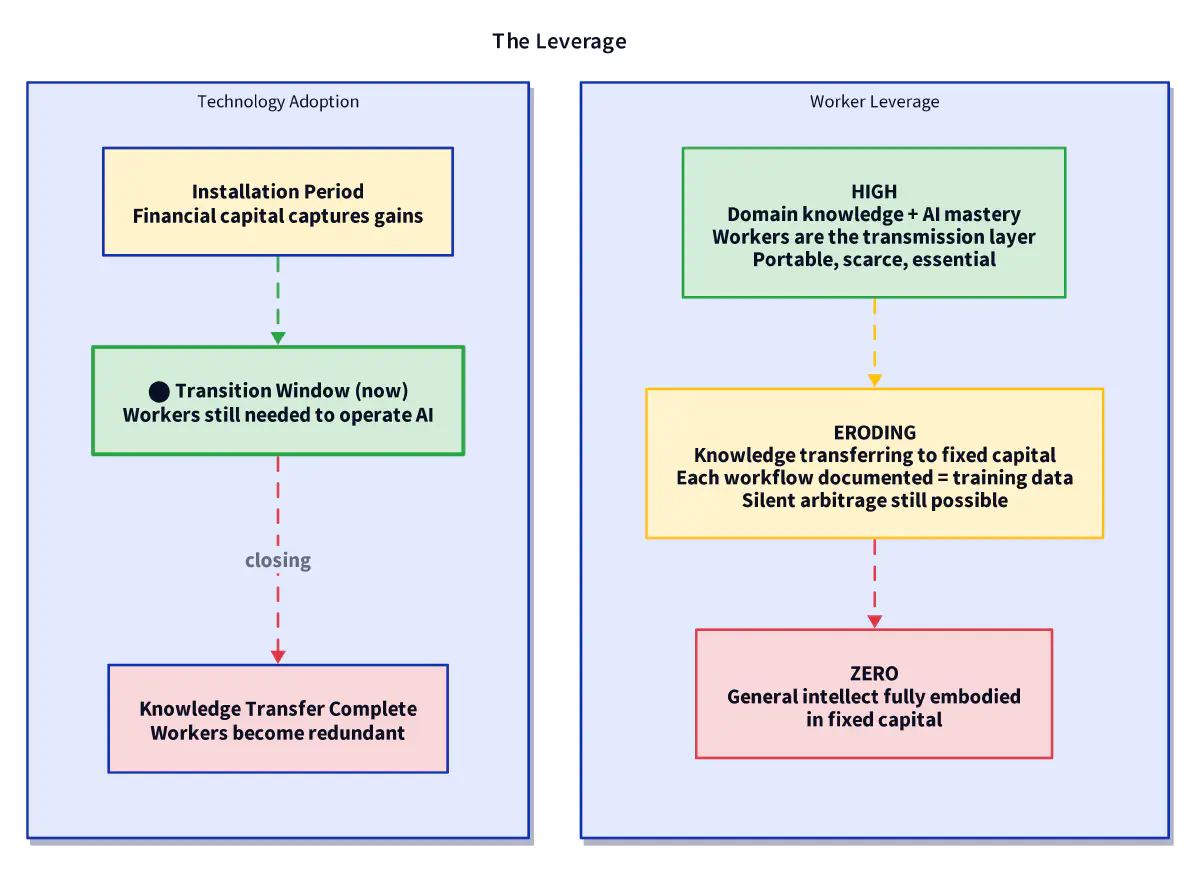

This is the baseline. It is important to hold it clearly, because the argument that follows is not that the compulsion has stopped. It is that, for the first time, the compulsion has created a structural condition that workers can use — if they move before the window closes.

The Machine Needs You — For Now

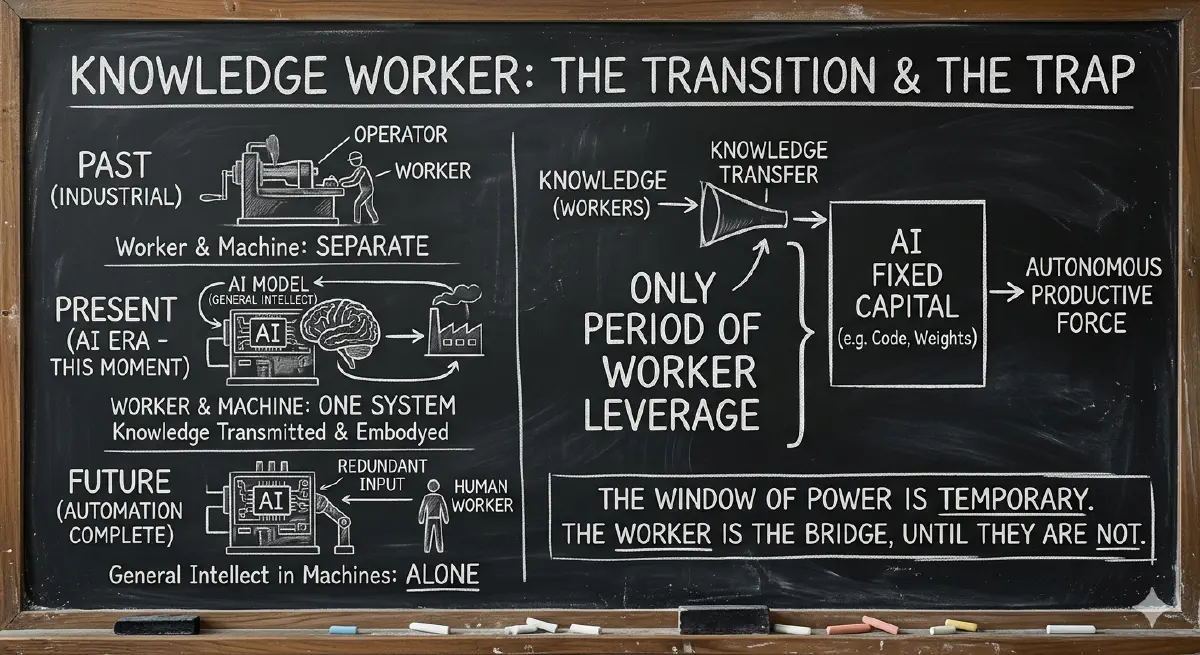

An AI model on a server produces nothing useful by itself. It is like a building that cannot yet stand on its own: it needs knowledge workers to act as the scaffolding.

Right now, the human and the machine are locked together. You cannot have the building without the scaffolding. But this is only for a moment. Every time a developer fixes a bug or an analyst validates an output, they are teaching the building how to stand on its own. The general intellect is being moved from the worker’s brain into the machine’s code. Once the building is finished, the scaffolding is no longer a partner. It is just an obstacle to be removed.

We are in that transition period now. The general intellect is still being assembled. The models still require human cooperation to become organizationally productive. That cooperation is not a permanent condition. It is a window.

Managers are in an identical position, one layer up. Their function — synthesizing information, allocating resources, translating strategy into execution, coordinating across organizational boundaries — is precisely what AI agents are being positioned to automate. But deploying that automation requires restructuring organizations, redefining workflows, and taking accountability for outcomes that the model cannot take. That work flows through managers. They are being asked to enthusiastically build the apparatus that eliminates their own role.

In every prior automation wave, managers could advocate for displacing the workers below them — it increased their span of control and their apparent value to the firm. AI inverts this entirely. The further up the knowledge hierarchy you go, the more structurally threatened you are. The analyst, the project manager, the department head, the regional director — every layer of cognitive coordination is in the crosshairs simultaneously, globally, across every sector and every industry.

For the first time, workers and their managers share the same structural interest. The coalition of the threatened spans the entire organizational chart. Capital has never faced this configuration before. It arrived precisely because the fixed capital system, in its compulsion to automate everything, automated the layer of cognitive and coordinating work that reabsorbed displaced workers after previous automation waves.

The Signal Was Sent — And Received

Capital had one job during the transition period: keep its knowledge workers motivated enough to voluntarily deliver the AI productivity gain. Instead, between 2022 and 2026, it did the opposite — and did it publicly, as a demonstration.

According to layoffs.fyi, over 600,000 tech workers were laid off in 2022 and 2023 alone, and the cuts continued through 2024 and 2025. These were not distressed companies cutting costs to survive. Meta had $3.9 million in earnings for every employee it laid off. Microsoft had $9.8 million. Meta’s stock surged 19% after its cuts were announced. The layoffs were a demonstration of power: a public reassertion that productivity gains belong to shareholders, not workers. The message was sent clearly and at scale.

Every surviving knowledge worker received it: your extra effort will be captured as a new baseline, not rewarded. Your loyalty offers no protection. The gains of your productivity will not flow to you.

This is not a new dynamic. It is the installation period’s characteristic behavior, visible in every previous technological revolution. Carlota Perez, in Technological Revolutions and Financial Capital (2002), describes how the installation period of each major technology wave is driven by financial capital extracting maximum gains before any institutional framework exists to distribute them. The railway mania of the 1840s, the electrification wave of the 1890s, and the dot-com boom of the 1990s each followed the same pattern. Financial capital captures the gains, workers absorb the disruption, and the institutional framework that eventually distributes the benefits has to be fought for, against the system’s default preference to maintain the extraction.

The layoffs of 2022–2025 are the installation period behaving exactly as Perez predicts. They are not a mistake. They are the logic of the system operating as designed.

The rational response from workers is what the media condescendingly labeled “quiet quitting” — precise compliance with contractual obligations, nothing more. Gallup’s 2023 State of the Global Workplace report found that 59% of the global workforce was doing exactly this — not engaged, doing the minimum, psychologically disconnected. This was reframed in the media as a moral failing of workers, a laziness epidemic, a generational pathology. That framing was ideological cover. What Gallup actually measured was a rational collective adjustment to a broken incentive structure.

Work-to-rule — doing exactly your job and nothing more — has been a union tactic for a century. Quiet quitting is work-to-rule without the union.

Now add AI to this picture. A quietly quitting knowledge worker with AI assistance can appear to perform at exactly the expected level while expending a fraction of the cognitive effort. The surplus is absorbed silently — into rest, into personal projects, into simply living. Management cannot easily see it. The productivity gain evaporates before it reaches the balance sheet.

This is not laziness. This is variable capital — the worker’s labor power — reaping the benefit that fixed capital was supposed to capture for the firm. The firm invested in AI to increase the rate of surplus extraction from labor. The worker, by absorbing the productivity gain individually, inverts the extraction. The fixed capital investment produces surplus. The surplus flows to the worker rather than the firm. The general intellect has been turned, quietly and individually, against the system that assembled it.

Capital has handed workers the perfect tool for invisible resistance, then destroyed their incentive to do anything else with it.

The New Union That Needs No Union

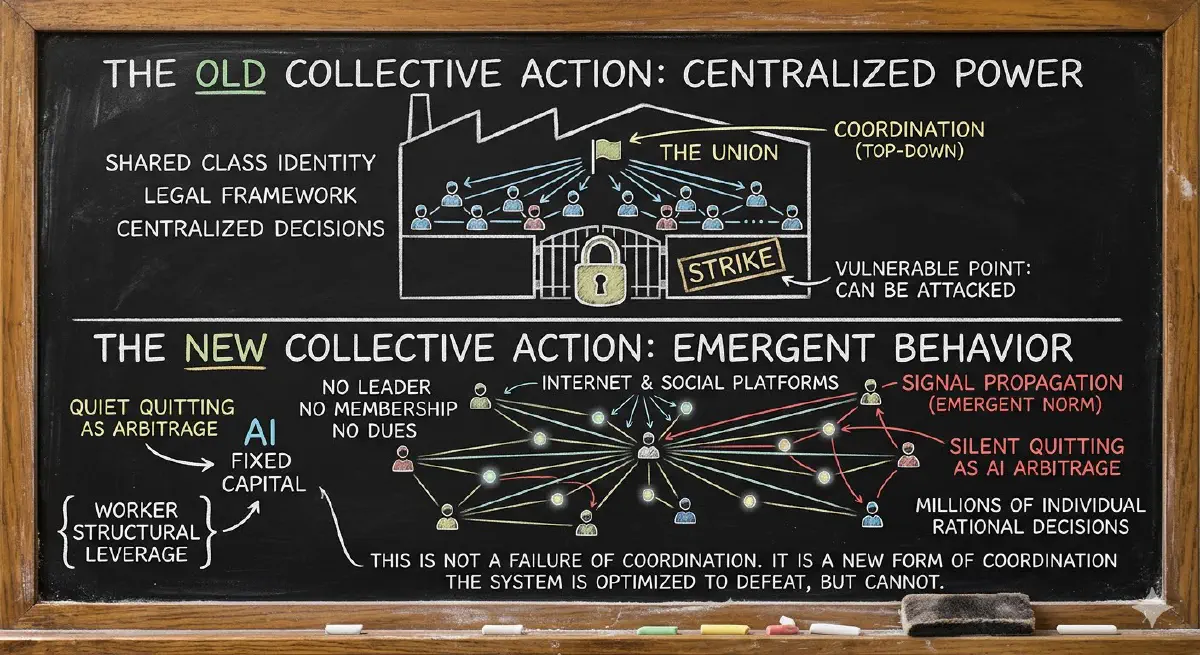

The historical union solved a collective action problem. Individual resistance to capital was suicidal — a single worker who refused to cooperate could be replaced immediately. Collective resistance required coordination: physical proximity on the factory floor, organizational structure, legal recognition fought for over decades, shared class identity built through struggle. The union was effective precisely because it centralized the collective action. And it was vulnerable for exactly the same reason — it could be attacked, infiltrated, legally constrained, and eventually captured.

Quiet quitting as AI arbitrage, or silent arbitrage, is something structurally different. It solves the same collective action problem without any of the coordination costs and without any of the vulnerabilities.

The coordination happens through the internet, horizontally, asynchronously, and without any central structure. Reddit threads, LinkedIn posts, Discord servers, Substack newsletters where workers share strategies, normalize the behavior, and establish that it is widespread. No leader. No membership. No dues. No moment of collective commitment that can be identified and attacked. The norm spreads through local adoption and signal propagation — each worker who reads about the practice and adopts it strengthens the norm for the next worker, without anyone organizing the process.

The Gallup data did not measure a coordinated movement. It measured the emergent collective outcome of millions of individual rational decisions that were never coordinated by any organization. That is not a failure of coordination. That is a new form of coordination — one that the productive system, optimized to defeat hierarchical labor organization, has no established mechanism to counter.

The personal choice dimension matters politically. The union carried class-war connotations that made it easy to frame as an attack on the firm, on productivity, on the social good. Silent arbitrage operates entirely within the existing framework of employment contracts. The worker is meeting their obligations. What happens to the efficiency gains of the tools used during working hours is not, in most employment contracts, specified. The surplus is not withheld. It is redirected — before it reaches the balance sheet, invisibly, individually, and legally.

There is an honest tension to hold here. Silent arbitrage is effective at the individual level and collectively suboptimal at the systemic level. It does not build political power. It does not address the workers whose jobs are replaced entirely rather than made faster — the ones outside the leverage window who cannot arbitrage because there is no job left to arbitrage from. It does not generate the political force needed for the fiscal redistribution that the monetary system will require when the demand loop breaks.

The union, at its best, did more than protect individual workers. It built the political power that forced the deployment period — it was part of the mechanism that produced the post-war settlement, the institutional framework that distributed the benefits of the mass production revolution broadly enough to sustain fifty years of shared growth. Silent arbitrage does not do this. It is the survival strategy for workers inside the leverage window during the installation period. The deployment period still requires political coordination — but coordination that, like silent arbitrage itself, can now happen without hierarchical organization. That is the subject of a fourth piece in this series (forthcoming).

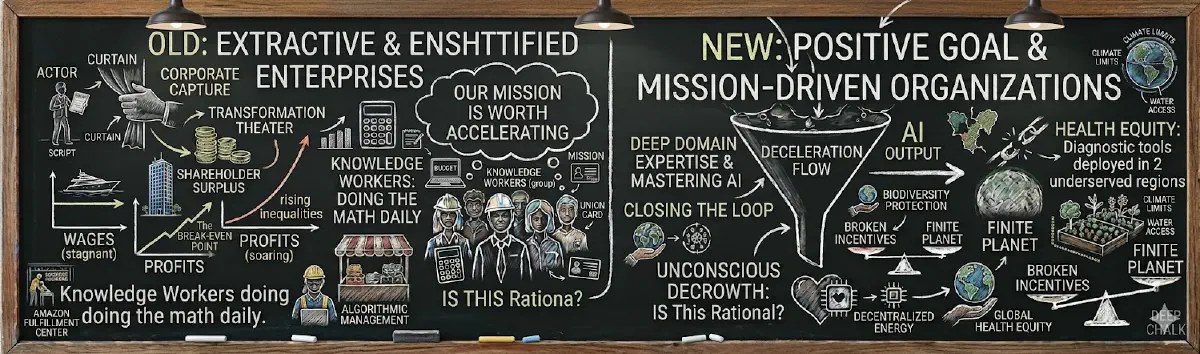

The Enshittification of the Machine

This is unfolding inside organizations that are, at scale, progressively less capable of perceiving it.

Cory Doctorow’s concept of “enshittification” describes how platforms systematically degrade — first serving users, then extracting from them, then extracting from everyone to serve shareholders until the platform collapses under its own cynicism. The mechanism applies equally to large organizations under shareholder pressure. Management layers multiply. Reporting, compliance, internal politics, and performative productivity theater consume an ever-larger share of cognitive labor. The ratio of value-extracting activity to value-creating activity grows until the organization’s primary function is its own reproduction.

AI was supposed to cut through this. Instead, large organizations have responded by creating AI governance teams, AI transformation offices, AI ethics committees — new bureaucratic structures to manage the technology that was supposed to reduce bureaucratic overhead. The workers and managers inside these organizations are operating in an environment where the incentive to withhold is high, the tools to conceal are readily available, and the organizational capacity to detect either has been hollowed out by the same enshittification process.

The deadlock is invisible from above. Senior leadership reads dashboards and hears what their middle management — itself quietly quitting, itself doing the AI arbitrage — chooses to report upward. The fixed capital system compelled the AI investment. The broken incentive structure ensures the investment produces theater rather than transformation. The general intellect sits on the servers. The organization cannot access it because it has spent years demonstrating to the people who hold the keys that the keys will be used against them.

The Closed Frontier

There is one more dimension that elevates this beyond a labor story — and connects it to the two pieces that follow in this series.

Every prior crisis of capitalism was resolved by expansion. New markets, new geographies, new labor pools absorbed the contradictions and reset the cycle. The productive system, compelled by its own logic to grow, found new frontiers when old ones were exhausted. That option is closing.

The Limits to Growth, the 1972 Meadows et al. report that modeled the interaction between exponential growth and finite planetary systems, predicted this collision with uncomfortable precision. The standard run scenario, which assumed no major changes to historical growth patterns, projected overshoot and decline in the early twenty-first century. Subsequent analysis tracking the report’s predictions against actual data has found the standard run scenario performing disturbingly well. We have already exceeded six of nine planetary boundaries, including climate, biodiversity loss, freshwater use, and land systems change. A 3% annual growth rate doubles the global economy roughly every 23 to 24 years. There is no physical substrate for that on a finite planet.
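
The doubling claim is simple compound-growth arithmetic, and a short sketch makes it checkable. Assuming a constant annual growth rate (a simplification, and the function names below are illustrative rather than taken from any cited source), the exact doubling time at 3% comes out at about 23.4 years, which the familiar rule of 72 rounds up to 24:

```python
import math


def doubling_time(rate: float) -> float:
    """Years for a quantity growing at a constant annual `rate` to double.

    Solves (1 + rate) ** t == 2 for t.
    """
    return math.log(2) / math.log(1 + rate)


def rule_of_72(rate: float) -> float:
    """Common mental shortcut: 72 divided by the growth rate in percent."""
    return 72 / (rate * 100)


# Assumed input: the 3% annual growth rate discussed in the text.
RATE = 0.03

exact_years = doubling_time(RATE)      # about 23.4 years
shortcut_years = rule_of_72(RATE)      # about 24 years
century_multiple = (1 + RATE) ** 100   # size after 100 years, relative to today

print(f"Exact doubling time: {exact_years:.1f} years")
print(f"Rule-of-72 estimate: {shortcut_years:.0f} years")
print(f"Size after a century: {century_multiple:.1f}x")
```

Sustained for a century, the same rate implies an economy roughly nineteen times its current size, which is the scale of the collision this section describes.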

Capital’s entire bet on AI is a bet that intensive growth, meaning more value extracted from the same or fewer material inputs, can permanently substitute for physical expansion. The general intellect, embodied in models rather than steel and oil, is the first fixed capital system that appears to have no physical frontier. Capital is betting that knowledge workers will deliver this enthusiastically, in exchange for the privilege of not yet being replaced.

But those workers have already received the full accounting. They have seen wages stagnate while profits hit records. They have watched the physical world degrade within their own lifetimes. They have calculated that the system they are being asked to accelerate is the same system that produced both conditions.

At the aggregate level, their collective deceleration — millions of individual rational decisions to absorb surplus quietly, to stop going above and beyond, to simply live more — constitutes an unconscious degrowth. Not a political program. Not a collective decision. The arithmetic of broken incentives meeting a finite planet, producing an outcome no one designed and everyone is living inside.

Perez’s deployment period, if it comes, will have to reckon with this. The institutional framework that distributes AI’s benefits broadly cannot be built on the assumption of unlimited physical growth. The post-war settlement was built on cheap energy, expanding resource extraction, and geographic expansion into new markets. None of those conditions are available at the same scale. The deployment period for AI has to be a different kind of settlement — one that distributes the gains of intensive growth within planetary limits rather than exporting the costs of extensive growth onto the future. What that redistribution mechanism looks like, and how the monetary system needs to be restructured to support it, is the subject of The Efficiency Trap.

The Prediction: Mission-Driven Orgs Win

This leads to a concrete, falsifiable prediction that runs against current conventional wisdom.

The organizations that will capture the most value from AI over the next decade are not the largest ones, not the ones with the biggest AI budgets, and not the ones running the most aggressive efficiency programs. They are the ones with the highest morale and the clearest mission — specifically, missions that workers recognize as genuinely worth accelerating.

A climate tech startup working on grid-scale battery storage, a healthcare nonprofit deploying diagnostic tools in underserved regions, an open source community building tools that are genuinely free and public — these organizations have something that no shareholder-driven enterprise can easily manufacture: workers who want the productivity gain to land. Who will voluntarily close the loop between AI output and organizational value, because the organizational value is something they believe in.

Compare this to a large enshittified enterprise — a legacy financial institution running AI transformation theater, a platform company deploying AI to extract more from already-exhausted users, a consulting firm billing AI-assisted work at pre-AI rates. These organizations have AI budgets, AI strategies, AI leadership. What they do not have is the social contract that turns AI capability into AI productivity. Their knowledge workers are doing the math every day, and keeping the surplus.

There is a second mechanism compounding this gap. A knowledge worker who has mastered both their business domain and AI tooling is, for the first time in the history of knowledge work, genuinely portable. The institutional knowledge that organizations relied on to keep workers sticky — the context, the relationships, the tacit understanding of how things actually work — can now be reconstructed at a new employer in weeks rather than years. Switching costs, historically the organization’s greatest retention tool, have collapsed asymmetrically. It costs the worker little to leave. It costs the organization enormously to replace them.

The highest-leverage knowledge workers — those combining deep domain expertise with genuine AI mastery — are now free agents in a way no previous generation has been. And free agents choose. They will not choose the enshittified org with the impressive AI strategy deck and the quiet layoff rumors. They will choose organizations that offer something the large extractive enterprise structurally cannot: a real mission, real autonomy, and a genuine share of the value they help create.

The irony is precise. The organizations best positioned to attract and retain these workers are disproportionately the ones oriented toward the problems that shareholder capitalism created and failed to solve — planetary limits, health equity, open knowledge, infrastructure that serves people rather than extracting from them. They win the AI productivity race not because they have more resources, but because they have rebuilt the social contract that makes productivity possible.

This is not an argument for virtue. It is an argument for organizational coherence. When the mission is real and the autonomy is genuine, the best workers arrive, stay, and bring everything they have. When the mission is a slide deck, the best workers leave — and take everything with them.

The Closing Challenge

Capital is in possession of the most powerful instantiation of the general intellect in human history and is structurally unable to capture its full potential — because that capture requires the enthusiastic cooperation of the people it has spent years demonstrating it does not trust.

The assembly line worker had no leverage over the machine. The travel agent had no leverage over the internet. The productive system deployed the technology and the workers adapted or were expelled. You have leverage over AI deployment — at every level of your organization, right now — that no previous generation of workers threatened by automation has ever had. Not because the system is weaker. Because the knowledge transfer is not yet complete, and the system still needs you to complete it.

That window does not stay open. The general intellect is being assembled. Every workflow you document, every output you validate, every process you restructure around AI is training data for the system that will eventually not need you to do it. The leverage is real and it is temporary. The question is what gets done with it before it closes.

Each day, by choosing which organization receives the full benefit of your cooperation, you are voting with the only currency that currently matters in the AI economy: your skilled, motivated, genuinely engaged attention, combined with the domain knowledge and AI mastery that no organization can lock down while you still hold it. Direct it toward organizations that are building something worth building, that share gains rather than hoard them, that are working within the limits of the planet rather than against them.

Capital has not yet understood what it is dealing with. The organizations that do — the ones that build trust, share the gains, offer real autonomy, and point their AI capacity at problems worth solving — will inherit the productivity windfall that everyone else is busy failing to capture.

The rest will keep scheduling AI transformation workshops and wondering why the dashboards don’t move.

Further Reading

- Karl Marx, Fragment on Machines (Grundrisse, 1858) — the original statement of the general intellect, written nearly 170 years before it became literally true

- Harry Braverman, Labor and Monopoly Capital (1974) — how capitalism systematically deskills labor to control it; the baseline this moment is breaking from

- Carlota Perez, Technological Revolutions and Financial Capital (2002) — the installation and deployment period framework; why the current extraction is the pattern, not an exception, and what historically determines who benefits from the deployment period

- Donella Meadows et al., The Limits to Growth (1972) — the foundational systems model of planetary boundaries; the standard run scenario has tracked actual data with uncomfortable accuracy

- Cory Doctorow, Enshittification: Why Everything Suddenly Got Worse (2025)

- Gallup, State of the Global Workplace 2023 — read as a measurement of rational collective adjustment, not moral failure

- Daron Acemoglu & Simon Johnson, Power and Progress (2023) — who historically captures the gains from transformative technology, and why workers benefiting is never the automatic outcome

- Jason Hickel, Less Is More: How Degrowth Will Save the World (2020) — the planetary boundary argument in full

- Lyn Alden, Broken Money (2023) — the monetary consequences of what happens when the leverage window closes; read alongside The Efficiency Trap
